Final Report 2010

Due Date

This report is due on the 22nd of October, 2010 (Friday, Week 11).

Executive Summary

This project further investigates the possible meaning behind the mysterious set of letters discovered with the unidentified body of a man at Somerton Beach, South Australia, in 1948. The project involves testing and verifying the hypotheses put forward by the previous students in 2009, and then using the results to systematically rule out the types of text the code may be derived from. This is achieved through the use of a web crawler and pattern matching algorithms to run extensive comparison tests between interesting sections of the mystery code and large amounts of raw text.

Aims and Objectives

For decades the written code that is linked to the Somerton Man Mystery has been undecipherable and has delivered no solid information about his history or identity. An initialism is an abbreviation of a sentence or phrase and is formed using the first letters of each word. Results from past year projects indicate that the mystery code is likely to be an initialism, with the possibility of a substitution cipher being attached to it. The project aims to shed light on the unsolved mystery by tapping into the wealth of information available on the internet. This is done by first verifying the results from past years, then creating a web crawler and text parser to search specifically for words and patterns similar to the Somerton Man’s code, and finally using statistical analysis to interpret the results we obtain. Of course, the ideal goal of this project would be to crack the code, but this is very unlikely, so the key sub-goals of creating the web crawler and text parser are the main focus.

Background

Case Background

At around 6.30 am on December 1st 1948 a dead body was discovered at Somerton Beach, here in South Australia, resting against a rock opposite a home for crippled children. The post mortem revealed that the man’s organs were too heavily congested for the cause of death to have been natural. 46 years later, in 1994, forensic science was used to determine that the man had died from digitalis which, at the time of the man’s death, was only accessible with a prescription[1].

The man was found with several possessions including:

- Cigarettes

- Matches

- A metal comb

- Chewing gum

- A railway ticket to Henley Beach

- A bus ticket; and

- A tram ticket

By far the most intriguing of his possessions, however, is the small piece of paper with the phrase “Tamam Shud” (meaning "ended" or "finished") on it. This piece of paper was identified as being from a book of poems called The Rubaiyat by Omar Khayyam, a famous Persian poet. After an announcement was made by the police, the copy of The Rubaiyat to which the piece of paper belonged was produced by a local person, who said he had found the book in the back seat of his unlocked car on the 30th of November[1].

The book had two things pencilled into it:

- A phone number that led police to a woman named Jestyn. Jestyn was a nurse who denied all knowledge of the dead man.

- A short code of what appeared to be random or encrypted letters.

The Code

The code found in the back of The Rubaiyat is a sequence of 40 to 50 letters. It has been dismissed as unsolvable for some time due to the quality of the handwriting and the small number of letters available. The first character on the first and third line looks like an “M” or “W”, and the fifth line’s first character looks like an “I” or a “V”. The second line is crossed out (and is omitted entirely in previous cracking attempts), and there is an “X” above the “O” on the fourth line. Due to the ambiguity of some of these letters and lines, some possibly wrong assumptions could be made as to what is and isn’t a part of the code.

Professional attempts at unlocking this code were largely limited due to the lack of modern techniques and strategies, because they were carried out decades earlier. When the code was analysed by experts in the Australian Department of Defence in 1978, they made the following statements regarding the code:

- There are insufficient symbols to provide a pattern.

- The symbols could be a complex substitute code or the meaningless response to a disturbed mind.

- It is not possible to provide a satisfactory answer.

To this day, neither the identity of the Somerton Man nor the meaning of the code has been determined, and this provides the basis for our research project. Due to the apparent cause of death, a spy theory also began circulating. In 1947, the US Army’s Signal Intelligence Service, as part of Operation Venona, discovered that there had been top secret material leaked from Australia’s Department of External Affairs to the Soviet embassy in Canberra. Three months prior to the death of the Somerton Man, on the 16th of August 1948, an overdose of digitalis was reported as the cause of death for US Assistant Treasury Secretary Harry Dexter White, who had been accused of Soviet espionage under Operation Venona. Due to the possibly similar causes of death, this added to suspicions of the unknown man being a Soviet spy.

Project Background

Probably since the beginning of recognizable human behaviour, coding has been fundamental to all human groups. It was a way to enable communication when ordinary spoken or written language was difficult or impossible. But for as long as communication was needed, concealment, too, seems to be just as common in human societies. Secret languages and gestures are characteristic of many human groups, serving as a means of concealing messages.

A cipher system is the systematic concealment of a message by substituting words or phrases with a different set of characters. Such systems began to be widely used in the 16th century, but they date back as far as the 6th century BC[2].

Coding and cipher systems have historically been limited to espionage or warfare, but the recent transformation of communication technology has added new opportunities and challenges for the budding cryptologist. We live in an age, with computerised communication, where the most valuable commodity is code and the second most valuable is the private information that it gives access to. In order to guard this information, we use encryption systems that have to be continually updated as every encryption system is eventually foiled. How to preserve privacy in an electronic age is one of the burning questions of our time[2].

This project focuses mainly on substitution ciphers (codes in which the letters have been replaced with different letters[3]) to determine the secret of the Somerton Man's code. In particular, we focus on the Playfair Cipher, the Vigenere Cipher, and the One-time pad.

Verification of Past Results

In order to develop guidelines that will give direction to the project goals, such as what language and cipher method the code is most likely in, it was important to re-run and analyse the results that were obtained by the previous students. This was achieved in two ways:

- Collection and compilation of random letter samples

- Re-run and verification of past algorithms

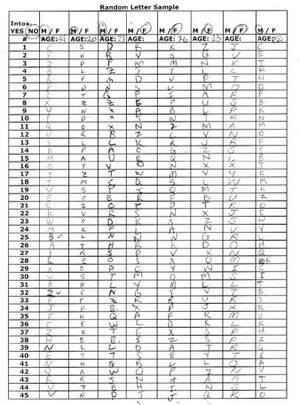

Random Letters

A total of 46 samples (23 sober, 23 intoxicated) were collected from a range of different subjects. The samples were compiled and compared to the letter distribution found in the mystery code. Samples from both sober and intoxicated subjects were compiled, just as last year’s students did, in order to also analyse the likelihood of the mystery code being produced randomly by a person under the influence of a poison. Due to the moral and ethical issues that arise with actually poisoning people, alcohol was used as a substitute in order to impair the subject’s judgment. A template was created for collecting the samples, with each sample consisting of 45 random letters; 45 was chosen as it is roughly the number of letters in the Somerton Man code. Details taken from each subject include the following, and can be seen in the completed form to the right.

- Intox. Yes/no – the physical state of each subject was recorded to keep samples taken from intoxicated subjects separate from those that were, to the best of our knowledge, not under the influence of any substance.

- Age – each subject was required to supply their age for future use

- M/F – the sex of each subject was recorded for future use

As can be seen by the sample to the right it was initially planned to use the age and sex data that has been collected to subdivide the results into different categories in order to conclude whether the letters in the Somerton man code are better correlated to a specific age group or sex. After the results had been compiled it was realised that this information will only prove fruitful when there is a much larger amount of random samples recorded. These extra details have not been utilised in the results of this project.

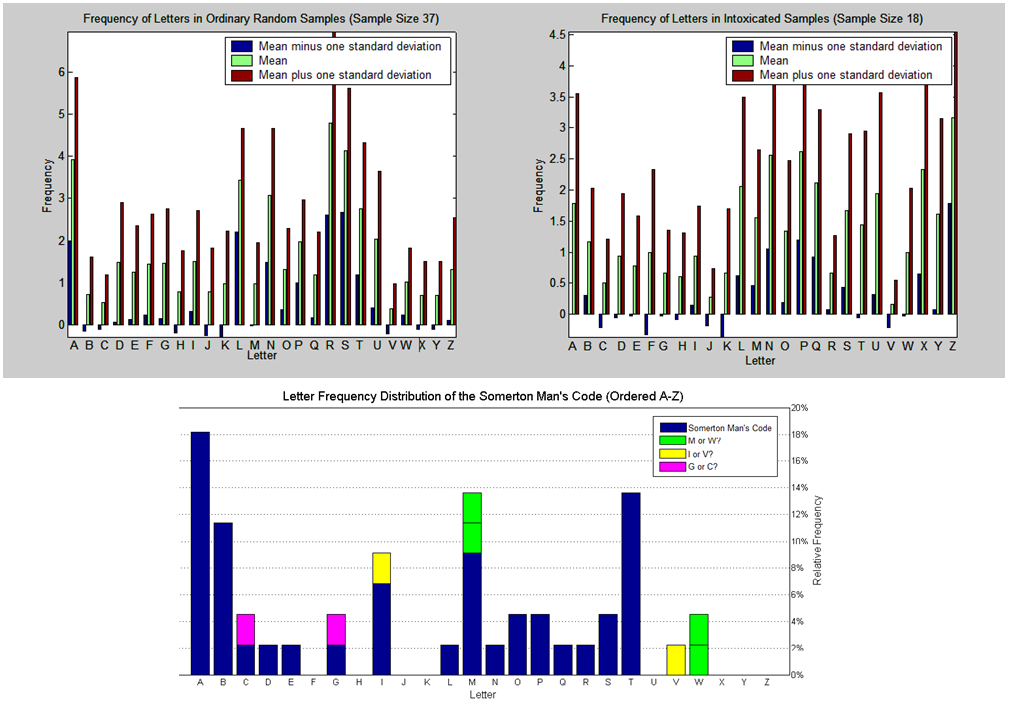

Discussion

As can be seen, the results collected are not identical to the previous students’ results; however, they are very different from the graph depicting the code from the Somerton Man. It is clear that in both sets of results there is not a single letter that has a frequency of less than 1%. This leads us to agree with the previous assumption that the code has not been produced randomly in a sober attempt to deceive or create a diversion.

A very similar result was obtained with the intoxicated samples. This suggests that it is also unlikely that the mystery code has been written in a random fashion by an intoxicated or delusional individual.

The fact that the two sets of results (this year and last year) differ is important. This suggests that the number of samples collected each time is most likely not enough to give a definitive answer as to whether or not the code has been produced randomly by an individual. Future studies could work on taking a much larger number of samples to see whether the letter distribution varies greatly from those already collected, and therefore also obtain a more definitive conclusion.

Another important detail is the small number of letters in the mystery code itself (about 45). If a given letter of the alphabet is only expected to occur, say, 1% of the time when produced randomly by a person, then it is quite likely that it would not occur at all in a sample of only 45 letters. If the code has been produced randomly, this could explain why some letters, such as X, Y, and J, are not present in the mystery code at all. However, the high frequency of the letters K and Z in the collected samples suggests that this is not the case.
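As a rough check of this argument, assume (as a simplification that was not measured in this project) that each of the 45 letters is chosen independently and that the letter in question has a probability of 0.01 at each position. The chance of that letter never appearing is then:

$$(1 - 0.01)^{45} \approx 0.64$$

Under these assumptions, such a letter would be absent from a 45 letter sample roughly two times out of three.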

In summary, from the results obtained, it is reasonable to assume that the mystery code has not been produced randomly by somebody in a state of intoxication or as a form of deception; however, this could be further established with a larger sample base.

Below are the results obtained by the 2009 students.

Verification of Past Algorithms

Last year’s project group attempted to selectively rule out different possibilities of the code’s meaning in order to get a better idea of what the code is. Specifically, they tested the code against transposition ciphers, as an initialism, and against several different cipher schemes, in both English and other languages.

One of the tests last year’s group made was to test the possibility of the code being a one-time pad. Using the Somerton Man’s code as the ciphertext and segments of the Bible as the cipher key, a candidate plaintext was obtained. This was also repeated using each of the poems in the Rubaiyat, and in all of these cases the resultant plaintext revealed no sentences or any English words at all. The resultant output for one of the lines in The Rubaiyat is shown below (the rest can be found here).

Ciphertext: MTBIMPANETP

Cipherkey: ANDLOTHEHUNTEROFTHEEASTHASCAUGHT

Resultant Plaintext: ESICAUMGKLK

Given that the cipher key is longer than the ciphertext in each line, each line was independently deciphered as a Vigenere cipher.
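The general idea of deciphering a line as a Vigenere cipher can be sketched as follows. This is a minimal illustration, not the previous group's code: the class and method names are hypothetical, and it assumes the common textbook convention plaintext = (ciphertext - key) mod 26 with A = 0. The previous group's software may have used a different letter indexing or key alignment, so this sketch is not claimed to reproduce the output listed above.

```java
class VigenereSketch {
    /**
     * Deciphers upper-case A-Z text with the textbook Vigenere rule
     * plaintext = (ciphertext - key) mod 26, where A = 0 (an assumed convention).
     * If the key is shorter than the ciphertext it is repeated; if it is longer,
     * only its first letters are used.
     */
    static String decrypt(String cipherText, String key) {
        StringBuilder plain = new StringBuilder();
        for (int i = 0; i < cipherText.length(); i++) {
            int c = cipherText.charAt(i) - 'A';
            int k = key.charAt(i % key.length()) - 'A';
            plain.append((char) ('A' + Math.floorMod(c - k, 26)));
        }
        return plain.toString();
    }

    public static void main(String[] args) {
        // Well-known textbook example, unrelated to the Somerton Man code.
        System.out.println(decrypt("LXFOPVEFRNHR", "LEMON")); // prints ATTACKATDAWN
    }
}
```

When the key is at least as long as the ciphertext, as in the lines tested here, no key letter is reused, which is the one-time pad situation described above.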

Last year’s group also made use of Markov chains to determine the probability of the code being in a certain language or using a specific cipher. In relation to this project, the Markov chain[4] models a line of text as a random process where the next character only depends on a fixed number of previous characters in the text. Due to the complexity of these chains, only the first and second order probabilities were calculated, using the following equations:

$$MP_{\text{first order}} = p(X_1)\,p(X_2 \mid X_1)\,p(X_3 \mid X_2)\cdots p(X_n \mid X_{n-1})$$

$$MP_{\text{second order}} = p(X_1)\,p(X_2 \mid X_1)\,p(X_3 \mid X_2, X_1)\cdots p(X_n \mid X_{n-1}, X_{n-2})$$

For example, the first order Markov probability is the probability of getting letter 1, times the probability of getting letter 2 given that the previous letter was letter 1, times the probability of getting letter 3 given that the previous letter was letter 2, and so on.

Because these probabilities are extremely small (of the order of $10^{-60}$), the probabilities were normalized using this equation:

$$\text{HMMER Score} = \log_2\left(\frac{MP}{(1/26)^{44}}\right)$$

where $(1/26)^{44}$ is the probability of the 44 letter code sequence under a uniform model in which each letter of the alphabet has a 1/26 chance of occurring at each position.
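To make the first order calculation concrete, the following is a minimal sketch and not the previous group's implementation. The class and method names are hypothetical. It assumes that transition probabilities are estimated from a training text with add-one smoothing, that p(X1) is taken as 1/26, and that the uniform baseline uses the length of the scored sequence (44 for the full code). Working in logarithms avoids the numerical underflow that such small probabilities would otherwise cause.

```java
import java.util.Arrays;
import java.util.Locale;

class MarkovScoreSketch {
    /** Estimates p(next letter | previous letter) from a training text. */
    static double[][] transitionProbabilities(String trainingText) {
        String letters = trainingText.toUpperCase(Locale.ROOT).replaceAll("[^A-Z]", "");
        double[][] p = new double[26][26];
        for (double[] row : p) {
            Arrays.fill(row, 1.0); // add-one smoothing so unseen pairs are never 0 (assumption)
        }
        for (int i = 1; i < letters.length(); i++) {
            p[letters.charAt(i - 1) - 'A'][letters.charAt(i) - 'A'] += 1.0;
        }
        for (double[] row : p) {
            double total = 0.0;
            for (double count : row) {
                total += count;
            }
            for (int j = 0; j < 26; j++) {
                row[j] /= total; // turn counts into probabilities
            }
        }
        return p;
    }

    /** Returns log2(MP / (1/26)^n) for an upper-case A-Z sequence of length n. */
    static double score(String sequence, double[][] p) {
        double log2Mp = log2(1.0 / 26.0); // p(X1), assumed uniform
        for (int i = 1; i < sequence.length(); i++) {
            log2Mp += log2(p[sequence.charAt(i - 1) - 'A'][sequence.charAt(i) - 'A']);
        }
        return log2Mp - sequence.length() * log2(1.0 / 26.0);
    }

    static double log2(double x) {
        return Math.log(x) / Math.log(2.0);
    }
}
```

A score above zero means the sequence is more probable under the trained model than under the uniform model, and a score below zero means it is less probable.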

This process was used on various texts to which the Playfair and Vigenere Ciphers were applied. The resultant Markov probabilities were very low and it was determined to be very unlikely that these ciphers were used. This process was also used to obtain Markov probabilities for the code being an initialism in different languages by analysing texts in those languages. It was determined that the code was most likely to be English if it was an initialism[5].

Their results conclude that the Somerton Man’s code is not a one-time pad or a Playfair or Vigenere Cipher, and rather resembles a set of initialisms which may or may not be substituted. From reviewing the previous year's code and attempting to confirm their results, we were able to come to the same conclusion. Based on this, our code attempts to analyse initialisms based in English text and further narrow down what the mystery code could be.

Methodology

The Text Matching Algorithm

Function

The text parser is a piece of code written in Java that parses a text or HTML file and attempts to find specific word and pattern segments within that file. It is divided into several parts and provides a means of parsing a large file directory relatively quickly. The parser makes use of readily available and easy to use packages from the Java API[6]. In particular, it utilises the Scanner class from the java.util package, as well as the File and FileReader classes from the java.io package.

Implementation

First, there is a main method that takes the user’s input of which mode to use, the directory to look through, and the pattern, word or initialism to search for. Once these inputs have been taken, the parser calls a method to find all of the files in the given directory. This method calls itself recursively whenever it finds a folder inside that directory.
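The recursive file search described above could look something like the sketch below. The class and method names are hypothetical and are not taken from the project's software; only the standard java.io.File calls are real API.

```java
import java.io.File;
import java.util.ArrayList;
import java.util.List;

class FileListerSketch {
    /** Adds every regular file under dir to found, descending into sub-folders. */
    static void collectFiles(File dir, List<File> found) {
        File[] entries = dir.listFiles();
        if (entries == null) {
            return; // dir is not a directory, or it could not be read
        }
        for (File entry : entries) {
            if (entry.isDirectory()) {
                collectFiles(entry, found); // recurse into the folder
            } else {
                found.add(entry);
            }
        }
    }

    public static void main(String[] args) {
        List<File> found = new ArrayList<>();
        collectFiles(new File(args.length > 0 ? args[0] : "."), found);
        System.out.println(found.size() + " files found");
    }
}
```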

Once the file list has been determined, the code calls a parsing method on each file in the list, chosen according to the mode selected in the main method. These are FindExact, which searches for an exact word in a file; FindInitialism, which searches for a given sequence of initial letters of consecutive words; and FindPattern, which searches for all initialisms that match a given pattern.

It is important to understand the difference between FindInitialism and FindPattern. FindInitialism takes the initialism as an input (e.g. “abab”) and then parses through a text and searches for it. On the other hand, FindPattern takes a pattern as an input (e.g. “#@#@” or “##@#”) and parses through a text to get every initialism that matches that pattern (so for the case of “#@#@”, FindPattern will find “abab”, “acac”, “xzxz”, etc).
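The pattern check at the heart of FindPattern can be illustrated with the following sketch. It is not the project's code, and the names are hypothetical. It assumes that the same symbol must always stand for the same letter and that different symbols must stand for different letters, so that “aaaa” does not count as a match for “#@#@”.

```java
import java.util.HashMap;
import java.util.Map;

class PatternMatchSketch {
    /** True if letters fit the pattern under a one-to-one symbol/letter mapping. */
    static boolean matchesPattern(String pattern, String letters) {
        if (pattern.length() != letters.length()) {
            return false;
        }
        Map<Character, Character> symbolToLetter = new HashMap<>();
        Map<Character, Character> letterToSymbol = new HashMap<>();
        for (int i = 0; i < pattern.length(); i++) {
            char symbol = pattern.charAt(i);
            char letter = Character.toLowerCase(letters.charAt(i));
            // putIfAbsent returns the earlier binding, or null if there was none.
            Character boundLetter = symbolToLetter.putIfAbsent(symbol, letter);
            Character boundSymbol = letterToSymbol.putIfAbsent(letter, symbol);
            if ((boundLetter != null && boundLetter != letter)
                    || (boundSymbol != null && boundSymbol != symbol)) {
                return false;
            }
        }
        return true;
    }

    public static void main(String[] args) {
        System.out.println(matchesPattern("#@#@", "abab")); // prints true
        System.out.println(matchesPattern("#@#@", "abba")); // prints false
    }
}
```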

The parsing code works by reading the text file as a string one line at a time. The string is then examined character by character to determine if a match or a pattern occurs. However, for the initialism and pattern searches we are only interested in the initial letter of each word, so specific conditional statements are needed to determine whether a character meets certain criteria before it is compared with the initialism or pattern we are searching for. Generally, any whitespace or punctuation such as periods or commas (but not apostrophes) is considered to mark the beginning or end of a word, so the character that follows is the one we are interested in.
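A minimal sketch of this word-boundary rule is shown below. The names are hypothetical and this is not the project's parser. It assumes that only the ASCII apostrophe needs to be kept inside a word and that any other non-letter character (including digits) acts as a separator.

```java
class InitialLettersSketch {
    /** Returns the lower-cased first letter of every word in the line. */
    static String initials(String line) {
        StringBuilder out = new StringBuilder();
        boolean atWordStart = true;
        for (int i = 0; i < line.length(); i++) {
            char ch = line.charAt(i);
            if (Character.isLetter(ch)) {
                if (atWordStart) {
                    out.append(Character.toLowerCase(ch));
                }
                atWordStart = false;
            } else if (ch != '\'') {
                // Whitespace and punctuation end a word; an apostrophe does not.
                atWordStart = true;
            }
        }
        return out.toString();
    }

    public static void main(String[] args) {
        System.out.println(initials("Awake! for Morning in the Bowl of Night")); // prints afmitbon
    }
}
```

The resulting string of initial letters can then be searched for an exact initialism or compared against a pattern.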

If the parser does find a match, it writes an output to the command prompt and to a results text file, stating the line number and the character position within that line where the match begins. Depending on the mode, it also prints the pattern or the actual initialism.

After it has gone through all of the files in the directory, a summary of the results is printed. For FindExact, it prints the number of times the word was found and in how many files. For FindInitialism, as well as the number of times the initialism was found, it also prints the expected proportion for the initialism across the entire set of files and the proportion that actually occurred. From this we can determine whether a set of files contained an initialism more or less often than expected. Finally, FindPattern prints out a list of which initialisms occurred and how often.

Because it is designed to parse text from HTML files, the parser is also programmed to ignore HTML code. This has the added advantage of improving both accuracy and speed, because less text has to be parsed. Additionally, when entering the word or initialism to look for while using FindExact or FindInitialism, the user can type an asterisk (*) and the code will treat that position as a wild card (any letter). While this does not have any real use for this project, it has general uses, such as when you do not know how to spell the word you are searching for.
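Both ideas can be sketched in a few lines. This is an illustration under stated assumptions rather than the project's implementation: the names are hypothetical, the tag removal is a naive regular expression that only handles tags that open and close on the same line, and the wild card is assumed to stand for exactly one letter.

```java
class ParserHelpersSketch {
    /** Replaces anything that looks like an HTML tag on this line with a space. */
    static String stripTags(String line) {
        return line.replaceAll("<[^>]*>", " ");
    }

    /** True if word equals query, where each '*' in query matches any one letter. */
    static boolean matchesWithWildcard(String query, String word) {
        if (query.length() != word.length()) {
            return false;
        }
        for (int i = 0; i < query.length(); i++) {
            char q = query.charAt(i);
            if (q != '*' && Character.toLowerCase(q) != Character.toLowerCase(word.charAt(i))) {
                return false;
            }
        }
        return true;
    }

    public static void main(String[] args) {
        System.out.println(stripTags("<p>Tamam <b>Shud</b></p>").trim());
        System.out.println(matchesWithWildcard("rub*iyat", "Rubaiyat")); // prints true
    }
}
```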

At the moment, there are some limitations to the code. Firstly, the code can only parse through text, HTML and other similar files. It cannot handle complex files such as Microsoft Office documents or PDF files and although this limits the amount of results we can obtain, there are many places that offer eBooks and such in text file format so it is not really a problem. Also, due to how the code was written, FindPattern is currently limited to finding initialisms that span only a single line of text. FindInitialism does not suffer from this limitation; however there are some issues in printing the initialism if it spans 3 or more lines. That is, the initialism is properly detected but not printed properly, so it is only really a problem for sentence accuracy and doesn’t really affect the frequency calculations for the results.

The latest version of the software can be found in the appendix.

The Web Crawler

Function

The basic function of the web crawling portion of the project is to access text on the internet and pass it directly to the pattern matching algorithm. This allows for a reasonably fast access method to large quantities of raw text that can be processed thoroughly and used for statistical analysis.

Implementation

Several different approaches were tried in implementing the web crawler in order to find a method that was both effective and simple to use. After experimenting with available open source crawlers such as Arachnid[7] and JSpider[8], we turned our attention to searching for a simpler solution that could be operated directly from the command prompt. Such a program would allow us to input a website or list of websites of interest, collect relevant data, and then have some control over the pattern matching methods that would be used to produce useful results. After much searching and experimenting, we came across an open source crawler called HTTrack[9]. HTTrack was used for the following reasons:

- It is free

- It is simple to use. A GUI version and command line version come with the standard package which allowed for an easy visual experience to become familiar with the program that was easily translated to coded commands.

- It allows full website mirroring. This means that the text from the websites is stored on the computer and can be used both offline and for multiple searches without needing to access and search the internet every time.

- It has a huge number of customisation options. This allowed for control over such things as search depth (how deep into a website to go), whether to follow links to external websites or stay on one site (which avoids jumping to websites that contain irrelevant data), and search criteria (only text is downloaded, with no images, movies or other unwanted files that are of no use and waste downloads).

- It abides by the Robots Exclusion Protocol (individual access rights that are customised by the owner of each website).

- It has a command prompt option. This allows for a user friendly approach and integration with the pattern matching algorithm.

To keep the whole project user friendly, a batch file was created that performs the following steps:

- Takes in a URL or list of URLs that are pre-saved in a text file at a known location on the computer.

- Prompts the user to enter a destination on the computer to store the data retrieved from the website.

- Accesses HTTrack and performs a predetermined search on the provided URL(s).

- Once the website mirroring is complete the program moves to the predetermined location containing the pattern matching code

- Compiles and runs the pattern matching code
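The project used a batch file for these steps; purely as an illustration, the mirroring step could also be driven from Java as sketched below. The class name, method names, example URL and folder are hypothetical. The sketch assumes that the `httrack` executable is on the system path and uses only its `-O` (output path) and `-rN` (mirror depth) options; the actual options used by the project's batch file are not given in this report.

```java
import java.io.IOException;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

class MirrorCommandSketch {
    /** Builds an HTTrack command line for the given URLs, output folder and depth. */
    static List<String> buildCommand(List<String> urls, String outputDir, int depth) {
        List<String> command = new ArrayList<>();
        command.add("httrack"); // assumed to be on the PATH
        command.addAll(urls);
        command.add("-O");      // folder in which to store the mirror
        command.add(outputDir);
        command.add("-r" + depth); // maximum mirror depth
        return command;
    }

    /** Runs the command and returns its exit code. */
    static int run(List<String> command) throws IOException, InterruptedException {
        return new ProcessBuilder(command).inheritIO().start().waitFor();
    }

    public static void main(String[] args) {
        // Hypothetical URL and folder, shown only to illustrate the command shape.
        System.out.println(buildCommand(Arrays.asList("http://www.example.com/"), "mirror", 3));
    }
}
```

Once the mirror has completed, the pattern matching code can be pointed at the output folder in the same way as at any other directory of text files.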

For a simple video demonstration of the web crawler working in conjunction with the pattern matching software please see Web Crawler Demo

The demonstration video above shows the use of the file input batch file. There is also a version that will take in URLs directly from the user. All software is available from the appendix.

Results

Exact Initialism

Method

Below is a table of the relative frequencies of the first letters of words in the English language, taken from a wiki page here[10]. We chose random 3 and 4 character long segments of the Somerton Man’s code and, using this table, obtained the expected probability of each segment occurring in regular English text.

| Letter | Frequency |

|---|---|

| a | 11.602% |

| b | 4.702% |

| c | 3.511% |

| d | 2.670% |

| e | 2.000% |

| f | 3.779% |

| g | 1.950% |

| h | 7.232% |

| i | 6.286% |

| j | 0.631% |

| k | 0.690% |

| l | 2.705% |

| m | 4.374% |

| n | 2.365% |

| o | 6.264% |

| p | 2.545% |

| q | 0.173% |

| r | 1.653% |

| s | 7.755% |

| t | 16.671% |

| u | 1.487% |

| v | 0.619% |

| w | 6.661% |

| x | 0.005% |

| y | 1.620% |

| z | 0.050% |

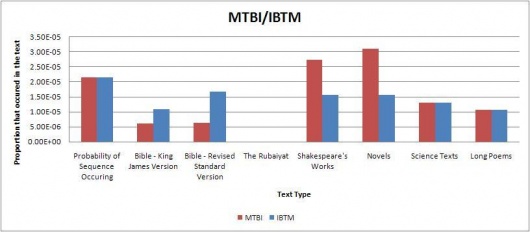

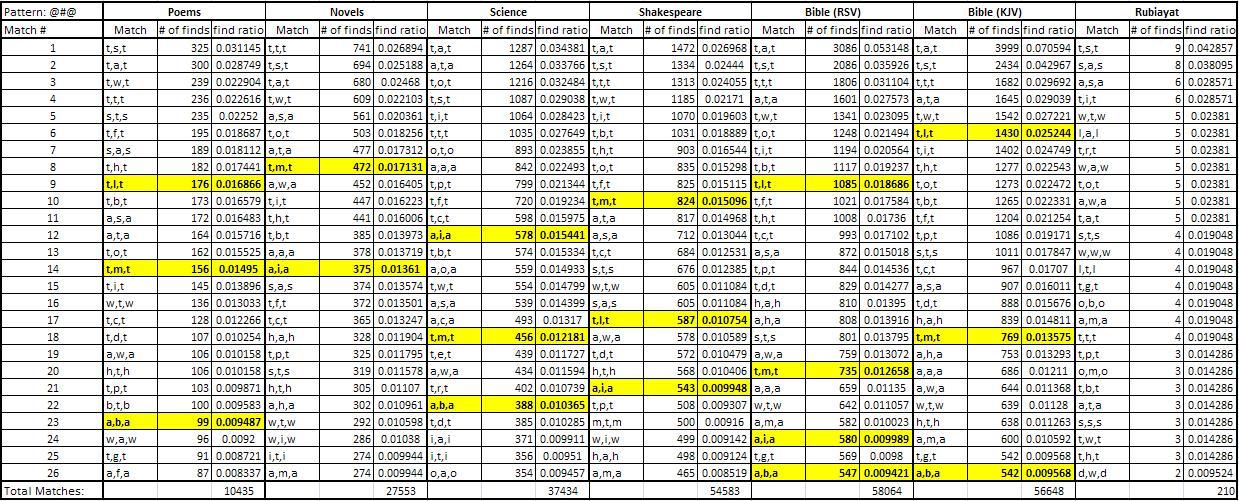

Several different types of text were divided into categories and then tested in groups. The categories include The Bible, The Rubaiyat, Novels, Science Texts, Long Poems, and works by William Shakespeare. The complete list of texts used can be found in the appendix. The expected probability was then compared to the actual proportion that occurred to determine what text type the mystery code could be from using the following formula:

$$P(\text{actual}) = \frac{\text{Total Number of Occurrences}}{\text{Total Words in Text} - n + 1}$$

where n is the size of the segment we are looking for.
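The comparison can be sketched as follows. This is an illustration rather than the project's code: the names are hypothetical, the expected probability is assumed to be the product of the first letter frequencies of each letter in the segment (which treats the initial letters as independent), and the counts in the example are made up.

```java
import java.util.HashMap;
import java.util.Locale;
import java.util.Map;

class ProportionSketch {
    /** Expected probability of a segment as the product of first letter frequencies. */
    static double expectedProbability(String segment, Map<Character, Double> firstLetterFreq) {
        double p = 1.0;
        for (char ch : segment.toLowerCase(Locale.ROOT).toCharArray()) {
            p *= firstLetterFreq.get(ch); // every letter of the segment must be in the map
        }
        return p;
    }

    /** P(actual) = occurrences / (totalWords - n + 1), with n the segment length. */
    static double actualProportion(long occurrences, long totalWords, int n) {
        return (double) occurrences / (totalWords - n + 1);
    }

    public static void main(String[] args) {
        Map<Character, Double> freq = new HashMap<>();
        freq.put('t', 0.16671); // from the first letter frequency table
        freq.put('m', 0.04374); // from the first letter frequency table
        System.out.println(expectedProbability("TMT", freq));
        System.out.println(actualProportion(3, 1002, 3)); // made-up counts
    }
}
```

The denominator is the number of positions at which a segment of n consecutive words can start in a text of the given length.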

Using the results, several tables and graphs were generated to simplify viewing. The raw tables and Excel sheets can be found in the appendix. Some of the graphs are below. The graphs indicate the proportions of each sequence found in the selected texts: the red bars show the code sequence as it appears in the Somerton Man's code and the blue bars show the reverse of the sequence (in cases where the sequence is a palindrome, a blue bar is displayed for both). The far left bar of each graph shows the expected probability of the sequence occurring in regular English text (found using the table above), and the remaining bars show the actual proportions found in each of the text types. This was done in order to easily compare whether the sequence was found more or less often than expected for each text type.

The remaining direct initialism graphs can be found on this page.

A raw comparison of actual number of hits can be seen in the tables below.

The table shows the total number of forward and backward initialisms found in each different text, as well as the total number of words in the text. This is important because longer texts will obviously have a greater chance of containing more initialisms, so in order to properly compare between texts, we have to determine how many initialisms were found relative to the length of the texts (which is the row titled "Ratio Relative to Total"). This is done for forward and backward initialisms separately as well as together.

From the tables, we can see that the text with the greatest number of initialisms found was the Revised Standard Version (RSV) of the Bible. However, this is likely to be the case only because the text files in the RSV Bible contain far more words than the Rubaiyat or the poems. We can actually see that, relative to the length of the texts tested, The Rubaiyat has the most hits for 3 letter initialisms whilst the science texts have the most hits for 4 letter initialisms.

Discussion

Our results indicate that most of the code segments occur at around the expected frequency for each of the text types, so we cannot determine whether the code belongs to any of the tested text types. However, from inspection of the results, the code is more likely to be divided into 3 word long fragments than 4 word long fragments. Additionally, we also considered that the code could be backwards. Typically, the results favoured forward segments over backward segments, although there were occasions when the backward segment appeared more often than the forward segment.

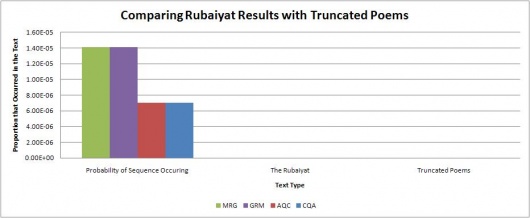

Of particular note was that neither the 4 nor the 3 character long code segments appeared much at all in the Rubaiyat. This seems highly suspect, and there is a possibility that someone intentionally created the mystery code in such a way that this would happen, because it is very unlikely that almost none of the code segments would be found in the Rubaiyat. More testing is probably needed to confirm this, by testing more poems and possibly truncating other poems to the same size as the Rubaiyat.

To test if The Rubaiyat results were meaningful or just an artifact of length, the same assorted poetry texts were truncated to a size of 400 to 500 lines down from several thousand (a similar size to The Rubaiyat text file) and then the same tests were run again. The results for this are shown in the graphs below.

The graphs show the expected probability of finding each sequence in English text on the left, followed by the actual proportion that occurred in The Rubaiyat as well as in the truncated poetry texts. The graphs show that for the truncated poetry texts there are some cases where the initialism is not found at all, but more often than not the initialism sequence is found at close to the expected amount. These results indicate that the abnormal results found for The Rubaiyat are unlikely to be an artifact of length and are more likely intentional.

These exact initialism results indicate that the Somerton man’s code could be a substitution cipher of initialisms found in the Rubaiyat, based on the fact that it is incredibly unlikely that none of the tested code segments were found in the Rubaiyat. To further test this theory, we began testing for patterns of initialisms to see if there was any correlation between texts and attempt to narrow down what the code has been substituted from.

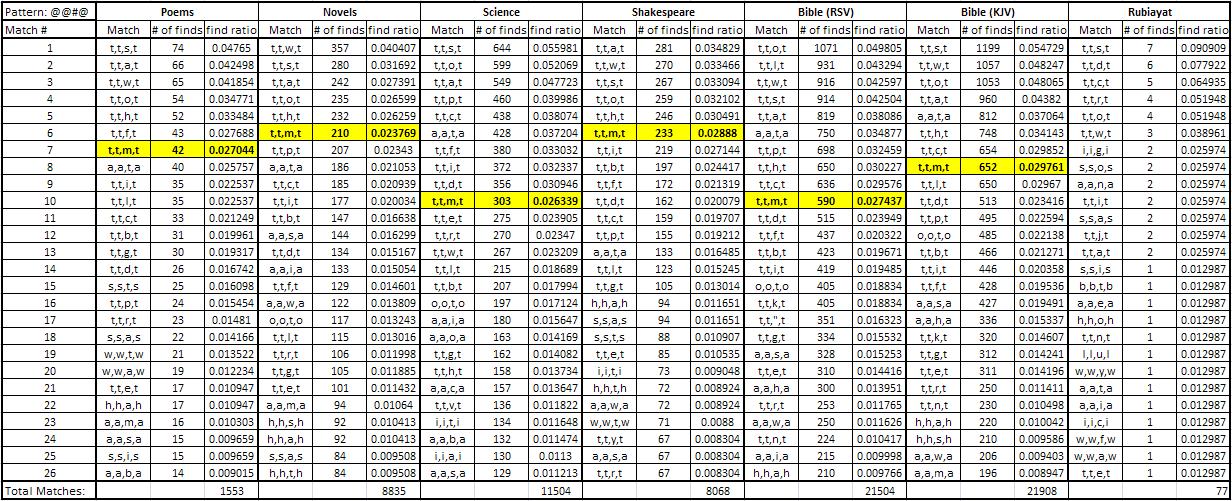

Pattern Initialism

The Pattern matching algorithm was utilised in a very similar manner to the Initialism algorithm discussed above. Patterns that contain two symbols and appear in the mystery code were highlighted and then used as a basis for a wildcard pattern search on the different types of English texts.

Pattern Selection

As shown in the following table the letters of the mystery code were spread out in order to single out the patterns that actually occur. The different pattern combinations can be seen highlighted in yellow and are broken down further in the next table.

As shown, patterns that occur both horizontally and vertically were used for the testing. It has since been decided that the vertical patterns are unlikely to be of any interest as a simple inspection of the actual mystery code shows very little structure or consistency in a vertical manner compared to the horizontal.

| M | R | G | O | A | B | A | B | D | | | | |

| M | T | B | I | M | P | A | N | E | T | P | | |

| M | L | I | A | B | O | A | I | A | Q | C | | |

| I | T | T | M | T | S | A | M | S | T | G | A | B |

The highlighted letters are all, in some form, part of a two symbol pattern of length 3 or 4. As shown below, patterns occurring both forwards (left to right) and backwards (right to left) were used for the testing. This assumes that there is a possibility that the code was written backwards.

From these patterns the individual wildcard code is derived, giving the patterns of interest that were used on the different English texts. For example where ABAB occurs in the mystery code, a pattern of @#@# was searched to reveal any combination of letters that match the ‘pattern’ of ABAB.
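Deriving the wildcard pattern from a code segment can be sketched as below. The class and method names are hypothetical; the sketch assumes, in line with the examples in this section, that the first distinct letter is written as @ and the second as #, and that a segment has at most two distinct letters.

```java
import java.util.HashMap;
import java.util.Map;

class PatternDeriveSketch {
    /** Converts a segment such as ABAB into its two symbol pattern @#@#. */
    static String toPattern(String segment) {
        char[] symbols = {'@', '#'};
        Map<Character, Character> assigned = new HashMap<>();
        StringBuilder out = new StringBuilder();
        for (char letter : segment.toCharArray()) {
            if (!assigned.containsKey(letter)) {
                if (assigned.size() == symbols.length) {
                    throw new IllegalArgumentException("more than two distinct letters: " + segment);
                }
                // The next unused symbol is given to each newly seen letter.
                assigned.put(letter, symbols[assigned.size()]);
            }
            out.append(assigned.get(letter));
        }
        return out.toString();
    }

    public static void main(String[] args) {
        System.out.println(toPattern("ABAB")); // prints @#@#
        System.out.println(toPattern("TTMT")); // prints @@#@
    }
}
```

Segments that differ only in their letters, such as ABA and TMT, reduce to the same pattern, which is why the list of distinct patterns is shorter than the list of segments.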

A full breakdown of the patterns of interest and their associated combination can be seen in the following table.

| Interesting 4 Symbol Sequences | Interesting 3 Symbol Sequences |

|---|---|

| Horizontal | |

| ABAB - @#@# | ABA - @#@ |

| TTMT - @@#@ | AIA - @#@ |

| TMTT - @#@@ | ITT - @## |

| | TTI - @@# |

| | TTM - @@# |

| | MTT - @## |

| | TMT - @#@ |

| | BAB - @#@ |

| Vertical | |

| MMMI - @@@# | TLT - @#@ |

| IMMM - @### | MMM - @@@ |

| AAAA - @@@@ | AAA - @@@ |

| | TQT - @#@ |

| | MMI - @@# |

| | IMM - @## |

| Patterns of Interest | |

| @#@# | @#@ |

| @@#@ | @## |

| @#@@ | @@# |

| @@@# | @@@ |

| @### | |

| @@@@ | |

Each of the pattern combinations found in the mystery code was then used to search through different types of text so that any differences between the initial letters used in the different text types would be clear. The results of these tests would then hopefully suggest the most likely origin of the mystery code if it is in fact an initialism.

The types of texts used were:

- Poems (a variety of long and short poems from a large variety of poets/archives)

- Novels

- Science texts (a selection of textbooks - chemistry, maths and physics)

- Shakespeare (entire collection)

- The Bible (separately tested the King James and Revised Standard Versions)

- Rubaiyat (book of poems associated with the murder itself)

Discussion

The results, for each pattern possibility, have been tabulated and can be found in the appendix. A few example results tables are shown below. The highlighted cells depict the patterns that are an exact match to the pattern sourced from the mystery code.

The first thing that is apparent from the results is the similarities between the different types of text. This suggests that the general occurrence of the order of initial letters in English is independent of the source. That is, it does not really matter where the raw text is from as it is all English and will produce very similar results.

The second interesting point is the highlighted results that relate to the letters taken directly from the mystery code. Having these results occur near the top of the list implies that it is still technically possible that the code is in fact an initialism without substitution. However, apart from the patterns of one letter (@@@@ and @@@), there is a very large spread of results, with the top result usually accounting for only about 5% of the total number of matches found. The top 26 matches are shown for each search; however, in the full list some patterns returned up to 500 different match variations. This suggests that if the mystery code is in fact an initialism, there is a good chance that it also uses some type of substitution method. That is, each letter actually represents a different letter.

The very low number of direct matches between the book of Rubaiyat and the mystery code is also important. This book, linked so closely to the murder, would be the most obvious source of the code. The results indicate that if the code was made as an initialism from the Rubaiyat, then it must also incorporate substitution.

Further Research

Our results were intended to identify the type of text that the Somerton Man’s code is from; however, no single text type showed many more occurrences than expected. In fact, other than The Rubaiyat, no probabilistic irregularities were found. To determine whether this is significant to unravelling the Somerton Man’s code, further testing is required. This can be done by running the text parser on more code segments, as well as on other texts.

Additionally, further testing with poems is needed. Only a small number of the poem texts tested match the format of the Rubaiyat (four lines per poem), so tests of other four-line poems are needed to determine whether the exact initialism results are specific to the Rubaiyat or common to all or most poems of a similar size. Another method would be to truncate the poem texts to the same size. The Rubaiyat is about 84 poems of 4 lines each, so we can truncate the other poem texts to either 84 poems, or possibly 300-400 lines, and then determine whether there are any differences in the results. Although this truncation method has been applied to the tested set of poetry texts, the results would be more reliable with more poetry texts.
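
One way to perform the truncation is sketched below. The function name and the default of 336 lines (84 poems of 4 lines each, using the approximate figure above) are assumptions for illustration, and blank lines are skipped so that stanza breaks do not count towards the limit.

```python
def truncate_to_lines(text, max_lines=336):
    """Keep only the first max_lines non-blank lines of a poetry text."""
    if max_lines <= 0:
        return ""
    kept = []
    for line in text.splitlines():
        if line.strip():
            kept.append(line)
            if len(kept) == max_lines:
                break
    return "\n".join(kept)
```

Each truncated text can then be passed through the same pattern search as the Rubaiyat, so that all poetry samples are compared at a similar size.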

Also, our results currently assume that all of the words in the text and in the code are independent of each other. We know this isn’t actually the case and that there is some dependence between the initial letters of adjacent words. Our results could be expanded by taking this into account and using Markov chains, similar to last year’s project group[11].
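
To make the difference between the two assumptions concrete, the sketch below scores a sequence of initials first as independent letters and then with a first-order Markov chain, where each initial depends on the one before it. This is not the method used by the previous project group; the function names are hypothetical, and add-one smoothing over a 26-letter alphabet is an assumption chosen so that unseen letters or pairs do not produce a zero probability.

```python
import math
from collections import Counter, defaultdict

ALPHABET_SIZE = 26  # assumed: upper-case English initials only


def train_first_order(initials):
    """Count single initials and adjacent pairs of initials."""
    unigrams = Counter(initials)
    transitions = defaultdict(Counter)
    for prev, nxt in zip(initials, initials[1:]):
        transitions[prev][nxt] += 1
    return unigrams, transitions


def log_prob_independent(sequence, unigrams):
    """Log-probability of sequence if every initial is independent."""
    total = sum(unigrams.values())
    return sum(
        math.log((unigrams[ch] + 1) / (total + ALPHABET_SIZE))
        for ch in sequence
    )


def log_prob_markov(sequence, unigrams, transitions):
    """Log-probability of sequence if each initial depends on the previous one."""
    if not sequence:
        return 0.0
    total = sum(unigrams.values())
    logp = math.log((unigrams[sequence[0]] + 1) / (total + ALPHABET_SIZE))
    for prev, nxt in zip(sequence, sequence[1:]):
        row = transitions.get(prev, Counter())
        logp += math.log((row[nxt] + 1) / (sum(row.values()) + ALPHABET_SIZE))
    return logp
```

Training on the initials of a large text and comparing the two scores for a code segment would show how much the independence assumption changes the expected frequency of that segment.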

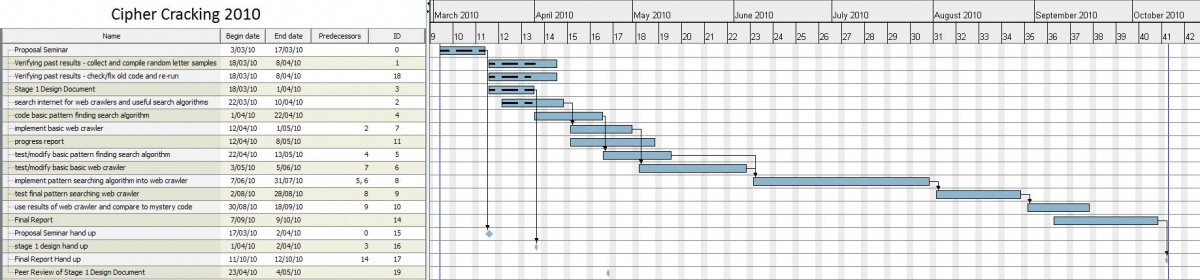

Project Management

Work Allocation and Structure

To make the most efficient use of time, the workload was initially broken into two different streams. This allowed us to work in parallel for a significant part of the project. Working in parallel on the initial software components also allowed for much more efficient use of our time by bypassing the need for any serious version control. The actual tasks performed were almost identical to the following diagram, with a small exception in producing the wild card pattern matching algorithm, where both members of the group contributed.

Schedule

To keep track of the project schedule, a Gantt chart was used. The project ran very close to the estimated schedule and no changes were made to the Gantt chart throughout the project. As the exact due dates for the deliverables were not known at the start of the project, they are not shown correctly; however, all deliverables were met on time.

Risk Management

The risks associated with the project were low because the project was largely software based, and no issues arose. Since we did not need any hardware, the project was not affected by any long-term delays. The table below highlights some of the most likely risks that could have become an issue and their estimated impact levels on the project's progression.

| Risk | Probability | Impact | Comments |
|---|---|---|---|
| Project member falls sick | 4 | 6 | Could leave 1 member with a huge amount of work to complete |
| Unable to code pattern matching algorithm | 2 | 8 | Additional time might have to be spent, delaying the project |
| Unable to contact project supervisors | 2 | 6 | If it is at a critical time problems may arise |
| Crawlers not effective at parsing data | 3 | 5 | A different web crawler may have to be used, delaying the project |
| Run out of finance | 1 | 7 | Has the potential to be a problem if software needs to be purchased |
| Somebody else cracks the code | 0.009 | 5 | The project may lose its "x" factor but the web crawler will still be useful |

Budget

Once again, as the project was highly software based, the expenses for the project were very low. Although the project had up to $500 available, there were very few expenses and the project came in well under budget. The total expenses for the project are:

- The Secrets of Codes: Understanding the World of Hidden Messages by Paul Lunde - $50

Summary and Conclusions

The main aim of the project was to create a web crawler and text parsing algorithm for use in this project as well as general non-specific use. We believe that we were quite successful in this element because our code is quite capable of parsing through a single file or several directories and storing the results into another text file. The code can be improved by attempting to remove some of the limitations stated earlier.

This project attempted to prove that the Somerton Man's code belonged to a specific English text type by testing the frequency of initialisms occurring; however, based on our testing, we could not find any significant differences in frequency across the text types. The results show that the text type with the greatest number of initialisms found was the Revised Standard Version of the Bible; however, this is only due to the length of the text. The text type with the greatest number of initialisms found relative to its length was The Rubaiyat for 3 letter initialisms and science texts for 4 letter initialisms. It is currently undetermined whether this has any significance.
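
The effect of text length on raw counts can be removed by expressing matches as a rate. The sketch below ranks text types by matches per 1000 initial letters; `rank_by_rate` is a hypothetical helper, and the numbers in the example are invented purely for illustration and are not results from this project.

```python
def rank_by_rate(results):
    """Rank text types by matches per 1000 initial letters.

    results maps a text-type name to (match_count, total_initials).
    """
    rates = {}
    for name, (matches, total) in results.items():
        if total <= 0:
            raise ValueError(f"{name}: total_initials must be positive")
        rates[name] = 1000.0 * matches / total
    return sorted(rates.items(), key=lambda item: item[1], reverse=True)


# Invented numbers, for illustration only (not results from this project).
example = {"long text": (50, 100_000), "short text": (5, 2_000)}
ranking = rank_by_rate(example)
```

In this made-up example the long text has ten times as many raw matches, yet the short text ranks first once the counts are divided by length.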

Perhaps the most promising lead is the lack of results found in The Rubaiyat; it seems incredibly unlikely that such a low frequency would occur unless it was intentional. Although it seems highly suspect, more testing is needed to determine whether this is significant. As stated earlier, testing poetry texts truncated to the same size as The Rubaiyat would prove very beneficial.

Due to the sheer number of different initialisms, the pattern initialism results could not be completely analysed and we were unable to determine what kind of substitution cipher, if any, was being used. To further this area of the project, we need to analyse the most frequently occurring initialisms for each pattern and cross-reference them with every other pattern to narrow down the possible substitutions used. Due to time constraints, this could not be completed.

Our results suggest that the Somerton Man's code is most likely an initialism taken from The Rubaiyat and involves some type of cipher. Although this cannot be absolutely confirmed, we believe that this is currently the best theory surrounding the mystery of the Somerton Man's code.

Appendix

Texts Used in Analysis

As stated earlier, the texts used for testing were divided into several different categories in order to analyse the frequencies of the Somerton Man's code segments appearing within the text and observe any irregularities. The majority of the texts were obtained from Project Gutenberg[12], with the rest taken from other non-specific websites. The complete list of texts used is below, and can be downloaded here.

The Rubaiyat

- The Rubaiyat of Omar Khayyam

The Bible

- King James Version

- Revised Standard Version

Assorted Poems

- Pastoral Poems by Nicholas Breton, Selected Poetry by George Wither, and Pastoral Poetry by William Browne (of Tavistock)

- Revised Edition of Poems by Bill o’th’ Hoylus End

- Poems Series One By Emily Dickinson

- The Kings and Queens of England with Other Poems by Mary Ann H. T. Bigelow

- The World's Best Poetry, Volume 3: Sorrow and Consolation

Novels

- 1984 by George Orwell

- Alice in Wonderland by Lewis Carroll

- Dracula by Bram Stoker

- Pride and Prejudice by Jane Austen

- The Time Machine by HG Wells

Science Texts

- An Elementary Study of Chemistry by William McPherson & William Edwards Henderson

- Elements of Agricultural Chemistry by Thomas Anderson

- The Chemistry of Plant Life by Roscoe Wilfred Thatcher

- The New Physics and Its Evolution by Lucien Poincare

- Newton's Principia - The Mathematical Principles of Natural Philosophy by Isaac Newton

Works by William Shakespeare

- The Entire Collected Works of William Shakespeare

Software

The latest version of the pattern matching algorithm and batch file can be downloaded here.

The open source web crawler used was HTTrack and can be found here.

Results Files

The random letter collection results can be found here.

The exact initialism results can be found here. Inside are the raw text file results obtained from the text parser, as well as several Excel spreadsheets with tables and graphs.

The pattern initialism results can be found here.

Miscellaneous

A simple video demonstration of the web crawler and pattern matching software can be seen here.

A blank template of the random letter sample sheet can be found here.

References

- [1] http://en.wikipedia.org/wiki/Taman_Shud_Case

- [2] The Secrets of Codes: Understanding the World of Hidden Messages by Paul Lunde

- [3] http://en.wikipedia.org/wiki/Substitution_cipher

- [4] http://en.wikipedia.org/wiki/Markov_chain

- [5] https://www.eleceng.adelaide.edu.au/personal/dabbott/wiki/index.php/Final_report_2009:_Who_killed_the_Somerton_man%3F#Initial_Letters_of_a_sentence

- [6] The Java API, http://java.sun.com/j2se/1.5.0/docs/api/

- [7] The Arachnid Web Crawler, http://arachnid.sourceforge.net/

- [8] The JSpider Web Crawler, http://j-spider.sourceforge.net/

- [9] http://www.httrack.com/

- [10] http://en.wikipedia.org/wiki/Letter_frequency#Relative_frequencies_of_the_first_letters_of_a_word_in_the_English_language

- [11] https://www.eleceng.adelaide.edu.au/personal/dabbott/wiki/index.php/Markov_models

- [12] http://www.gutenberg.org/wiki/Main_Page

See Also

- Direct Initialism Graphs

- Progress Report 2010

- Stage 1 Design Document 2010

- Cipher Cracking 2010

- Final report 2009: Who killed the Somerton man?

- Timeline of the Taman Shud Case

- List of people connected to the Taman Shud Case

- List of facts on the Taman Shud Case that are often misreported

- Structuring the Taman Shud code cracking process

- List of facts we do know about the Somerton Man