Critical design review 2010: Who wrote the Letter to the Hebrews?

Supervisors

Collaborators

Students

Due date

This assignment is due by Semester 2, Week 8, 2010.

Abstract

The authorship of the Letter to the Hebrews has been disputed for over 1,800 years. Numerous authorship attribution techniques have been applied to the text, but the results have often been inconclusive, or have only been able to show that Paul of Tarsus (the Apostle Paul) was most likely not the author. In this project, the team aims to enhance three existing extraction algorithms, namely Function Word Analysis (FWA), Word Recurrence Interval (WRI) and Trigram Markov, in order to identify the author of the letter. In past research, the results of these algorithms were compared statistically using Principal Component Analysis (PCA) or Linear Discriminant Analysis (LDA). In this project, the team instead aims to develop a classification model using a Support Vector Machine (SVM), a classifier that has been reported to be highly accurate, and thereby to contribute additional evidence regarding the author of the Letter to the Hebrews.

Project Aims

The project aims to address the controversy “Who wrote the Letter to the Hebrews?” The team intends to enhance three extraction algorithms, Function Word Analysis (FWA), Word Recurrence Interval (WRI) and Trigram Markov, which have been shown to produce relatively satisfactory results in authorship detection compared with data compression, and to compare their effectiveness with that of existing algorithms. The team plans to use a Support Vector Machine (SVM) to develop a classification model that can accurately assign a disputed text to its author using a database of undisputed texts. With this model, the team would be able to present a well-supported hypothesis on the question “Who wrote the Letter to the Hebrews?” In addition, if time permits, the team would aim to verify the authorship of other controversial texts such as The Federalist Papers and the works of Shakespeare. Furthermore, the team would like to extend the algorithms to applications such as source code plagiarism detection and future search engines.

Background to the project

Background to the problem of the authorship of the Letter to the Hebrews

Rev. Dr Hooykaas wrote that “Fourteen epistles are said to be Paul's; but we must at once strike off one, namely, that to the Hebrews, which does not bear his name at all.” About 1,800 years after the Letter to the Hebrews was written, the identity of its author remains uncertain.

In the past, scholars have often analysed the Letter to the Hebrews by comparing its style of writing with that of the other epistles that are undisputedly written by Paul. Authors have both conscious and subconscious aspects to their writing. While the conscious aspects can be altered to suit the type of article being written, the subconscious aspects form a unique fingerprint of a writer’s work. However, this approach raises issues of its own. One is the possibility that Paul was the original author of a letter written in Hebrew, and that Luke translated it into Greek, which would leave Luke’s style in the Letter to the Hebrews [10]. In addition, a study by Holmes showed that sentence length and word length often give incorrect results [9].

This project takes a different approach to determining the authorship of the letter. The aim is to analyze the letter using statistical measures, namely Function Word Analysis, Word Recurrence Interval and Trigram Markov, and to produce strong statistical evidence to assist the team in determining the author of the Letter to the Hebrews.

Background to authorship detection

Over more than a century, many researchers have worked on authorship detection, producing a large and diverse body of methods. The earliest study considered here was conducted by Mendenhall [1], who used the characteristic curve of composition. However, it was heavily criticised by Florence in 1904 on the grounds that the technique was principally controlled by the language in which the text is written.

In 1983, Smith [2] used stylometric measurements such as the word count, sentence count and number of punctuation marks in a text. However, he also found that these measurements gave highly inaccurate results.

In 1990, Hilton [3] applied word pattern ratios to the Book of Mormon, for example the number of times ‘a’ appears as the first word of a sentence divided by the number of sentences in a paragraph. His results led to some interesting conclusions regarding authorship attribution.

In 1995, Holmes and Forsyth [4] used Principal Component Analysis (PCA) and Linear Discriminant Analysis (LDA) for classification, with vocabulary richness and word frequency analysis for data preparation. Their research suggests that, when word frequency analysis is used, large sets of common words (50 or more) are needed to obtain meaningful results.

In 2000, Stamatatos et al. [5] proposed a method for categorizing texts by genre or author using natural language processing (NLP). They took advantage of existing NLP tools by using analysis-level style markers that provide useful stylistic information, and concluded that the method achieved relatively high classification accuracy.

In 2002, Baayen et al. [6] applied PCA and LDA to the most frequent function words in students’ essays. They found that simply including punctuation marks in the analysis improved classification accuracy, which suggests that punctuation marks may be effective style markers.

In 2003, Juola and Baayen [7] applied a method called cross-entropy to authorship attribution. Cross-entropy measures the unpredictability of a given event, given a specific model of events and expectations.

In 2004, Sabordo et al. [8] applied a data compression technique to the Letter to the Hebrews and reported poor results for the method.

In 2005, Putnins et al. [9] used a Trigram Markov model with Multiple Discriminant Analysis, and their results showed a very high accuracy rate in authorship detection.

(Review some of the classical methods and their weaknesses. Detail what we are going to do and highlight that we are going to avoid style and only use statistical methods.)

Significance of this project

Since the invention of the internet in the 1960s, it has developed at such a rapid rate that it has changed people’s lifestyles throughout the world. An increasing amount of economic and intellectual activity uses the global internet as a medium, and scrutinising the authenticity of documents has become more challenging. In addition, the available data is often vast and noisy, which means that it may be imprecise and that its structure is complex. A purely statistical technique would not produce satisfactory results on such data, and data mining was developed for this reason. It is a technique that filters out noisy data and extracts useful and relevant information hidden within large volumes of data.

Data mining techniques can perform well in a wide range of applications. Three major fields of application are plagiarism analysis, authorship identification and near-duplicate detection.

In plagiarism analysis, the technique is able to determine the similarities between objects such as source code, articles and music, and hence provides statistical evidence of plagiarism.

Authorship identification is another important application, through which existing controversies such as the Shakespeare authorship question and the Letter to the Hebrews may be unravelled. Data mining is not limited to text documents; it might also be applied to determine the author of other types of work, such as paintings and software.

Near-duplicate detection assists the development of next-generation search engines. The number of duplicate pages and mirror sites is rising as internet usage increases, leading to a larger demand on index storage space for search engines. The current problem is that searching is time consuming and results in imprecise information. Here, data mining aims to select a useful amount of data from each document for search engine categorization. An additional issue with the World Wide Web is the requirement for large-scale text search. With data mining techniques, it is feasible to use full-text search in place of a few hundred carefully selected characteristic words.

In addition, data mining is widely used in biology and forensics, for purposes such as DNA and fingerprint analysis.

Preliminary work and results carried out so far

Approach and methodology for remaining part of project

Project requirements

The project team hopes to build a classification model using a Support Vector Machine (SVM), with either a single chosen extraction algorithm or possibly a combination of algorithms, that would be able to associate a disputed text with its original author.

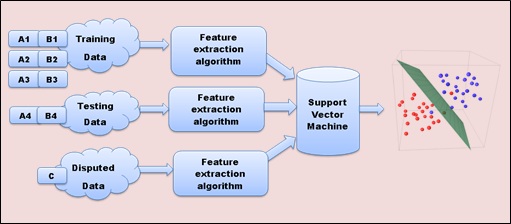

A disputed text is an article or any piece of writing whose authorship is uncertain. For example, given a disputed text, Text C, that is suspected to be the work of either author A or author B, we would build our classification model by feeding a set of training data to the SVM. The training data consists of several texts that are undisputedly attributed to either author A or author B.

As shown in Figure 1, for example, Texts A1 to A3 written by author A and Texts B1 to B3 written by author B are used as our training data. In practice, the amount of training data would vary depending on the accuracy required. Figure 1 also shows the testing data set, Text A4 and Text B4, each written by its respective author, which is used to determine the accuracy of our classification model. Likewise, in practice the testing data set would contain more than one sample per author. If Text A4 and Text B4 are classified correctly under their respective authors, we can reasonably assume that our classification model is functioning accurately. Thereafter, we pass our disputed text, Text C, through our extraction algorithm and then through our classification model to determine the actual author. If Text C cannot be classified under author A or author B, we can either enlarge our set of training data to develop the classification model further, or assume that Text C was written by neither author A nor author B. For the classification model to function as accurately as possible, it is worthwhile to have training and testing sets that are as large as possible.
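The train, test and classify workflow described above can be sketched in a few lines. The project plans to use an SVM in Matlab; the Python below is only an illustration, using a hand-written linear SVM trained by sub-gradient descent on the hinge loss. The function names `train_linear_svm` and `classify`, the hyperparameters and the two-dimensional feature vectors are all assumptions made up for this example and are not taken from the project.

```python
def train_linear_svm(X, y, lam=0.01, lr=0.1, epochs=200):
    """Train a linear SVM by sub-gradient descent on the regularised hinge loss.

    X is a list of feature vectors, y a list of labels in {+1, -1}.
    Returns the weight vector w and the bias b.
    """
    w = [0.0] * len(X[0])
    b = 0.0
    for _ in range(epochs):
        for xi, yi in zip(X, y):
            score = sum(wj * xj for wj, xj in zip(w, xi)) + b
            if yi * score < 1:
                # Inside the margin or misclassified: hinge-loss sub-gradient step.
                w = [wj - lr * (lam * wj - yi * xj) for wj, xj in zip(w, xi)]
                b += lr * yi
            else:
                # Outside the margin: only the regulariser shrinks the weights.
                w = [wj - lr * lam * wj for wj in w]
    return w, b


def classify(w, b, x, labels=("A", "B")):
    """Return labels[0] for a non-negative score and labels[1] otherwise."""
    score = sum(wj * xj for wj, xj in zip(w, x)) + b
    return labels[0] if score >= 0 else labels[1]


# Made-up feature vectors standing in for the output of an extraction algorithm.
TRAIN_X = [[0.90, 0.10], [0.80, 0.20], [0.85, 0.15],   # Texts A1 to A3
           [0.20, 0.80], [0.10, 0.90], [0.15, 0.85]]   # Texts B1 to B3
TRAIN_Y = [+1, +1, +1, -1, -1, -1]                     # +1 = author A, -1 = author B

w, b = train_linear_svm(TRAIN_X, TRAIN_Y)
print(classify(w, b, [0.80, 0.10]))   # held-out test vector for Text A4
print(classify(w, b, [0.20, 0.90]))   # held-out test vector for Text B4
```

Only once the held-out texts are classified correctly would the feature vector of the disputed Text C be passed to `classify`. A real study would use many more texts, higher-dimensional features and an established SVM library rather than this toy trainer.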

Three Data Extraction Algorithms

The project team has decided to use three data extraction algorithms, each to be implemented by the team itself. These algorithms were chosen because past researchers have reported high authorship identification accuracy with them, and because of their individual advantages, which are described below.

Function Word Analysis

Function words are prepositions, pronouns, auxiliary verbs, conjunctions, grammatical articles or particles, and are commonly said to have little lexical meaning or to have ambiguous meaning. They are used to express grammatical relationships with other words in a sentence, or to convey the frame of mind of the author.

Function word frequency analysis is a method in which the number of occurrences of function words in a set of undisputed texts is analyzed and compared with that in the disputed text. It has been reported to be a reliable method for distinguishing between authors. It can be applied to different languages and is genre independent [9], which allows a wider range of applications.
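As a rough illustration of the idea, the sketch below turns a text into a vector of relative function word frequencies. It is written in Python for brevity and is not the project's implementation. The word list `FUNCTION_WORDS` is a short hypothetical English list chosen for the example, and the tokenizer (lowercased runs of letters) is likewise an assumption.

```python
import re
from collections import Counter

# Hypothetical, illustrative list; the project's actual function word list is not given.
FUNCTION_WORDS = ["the", "of", "and", "to", "in", "a", "that", "is", "for", "but"]


def function_word_profile(text, function_words=FUNCTION_WORDS):
    """Return the relative frequency of each function word, in list order."""
    # Lowercase the text and keep runs of letters (works for non-Latin alphabets too).
    tokens = re.findall(r"[^\W\d_]+", text.lower())
    total = len(tokens)
    if total == 0:
        return [0.0] * len(function_words)
    counts = Counter(tokens)
    return [counts[word] / total for word in function_words]


profile = function_word_profile("The cat and the dog")
print(profile)
```

Dividing by the total number of tokens makes profiles comparable between texts of different lengths, so the profile of a disputed text can be compared with those of the undisputed texts.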

Word Recurrence Interval (WRI)

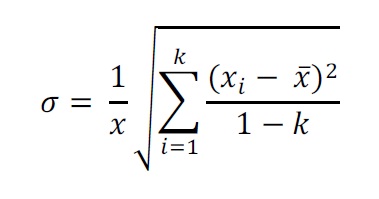

The Word Recurrence Interval (WRI) is the number of words between successive occurrences of a keyword. For example, in the text “the boy writes his report on the paper”, the word recurrence interval for the keyword “the” is five. A set of keywords is first selected based on the number of times each keyword is used in the text. The scaled standard deviation of the recurrence intervals of each keyword is then obtained using the following formula.

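$$\hat{\sigma} = \frac{\sqrt{\langle x^2 \rangle - \langle x \rangle^2}}{\langle x \rangle}$$

where $x$ denotes the recurrence intervals of the keyword and $\langle \cdot \rangle$ denotes the average over those intervals.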

We decided to use this technique because its main purpose is to remove the dependency on word frequency when characterizing word distributions, which gives a more statistical approach to the text. More importantly, it allows us to make comparisons between different texts or within the same text. The process of finding the scaled standard deviation is repeated for each keyword, resulting in a set of scaled standard deviations, one per keyword. These scaled standard deviations are saved as a vector, which is input into the SVM for data classification and further analysis.

In this project, the data extraction for this technique will be implemented in Java. The resulting set of scaled standard deviations of the WRI will then be used as input to Matlab, where an SVM is used for data classification.
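The WRI computation can be sketched as follows. The project plans a Java implementation; this Python version is only illustrative. It takes the scaled standard deviation to be the standard deviation of the intervals divided by their mean, which is an assumption about the exact normalisation. The function names are hypothetical, and returning `None` for keywords with fewer than two intervals is a choice made for this example.

```python
import math
import re


def recurrence_intervals(tokens, keyword):
    """Number of words between successive occurrences of keyword."""
    positions = [i for i, token in enumerate(tokens) if token == keyword]
    return [later - earlier - 1 for earlier, later in zip(positions, positions[1:])]


def scaled_std(intervals):
    """Population standard deviation divided by the mean, or None if undefined."""
    if len(intervals) < 2:
        return None
    mean = sum(intervals) / len(intervals)
    if mean == 0:
        return None
    variance = sum((x - mean) ** 2 for x in intervals) / len(intervals)
    return math.sqrt(variance) / mean


def wri_vector(text, keywords):
    """One scaled standard deviation per keyword, in keyword order."""
    tokens = re.findall(r"[^\W\d_]+", text.lower())
    return [scaled_std(recurrence_intervals(tokens, k)) for k in keywords]


tokens = "the boy writes his report on the paper".split()
print(recurrence_intervals(tokens, "the"))
```

The last two lines reproduce the example sentence from the text, where the two occurrences of “the” are separated by five words. In a full pipeline, keywords that yield `None` would have to be dropped or replaced before the vector is passed to a classifier.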

Trigram Markov Chain

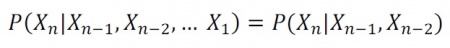

A Markov chain is defined by a set of states and the transitions between them. A first-order Markov chain has the memoryless property, which means that the next state does not depend on past states, only on the current one. The Trigram Markov Chain is a second-order variant of this class: the next state depends only on the previous two states.

In the context of authorship attribution, the Trigram Markov Chain assumes that the probability of the next letter or word (or character, in some other languages) depends only on the two letters or words before it. Mathematically, this is represented as:

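$$P(x_n \mid x_{n-1}, x_{n-2}, \ldots, x_1) = P(x_n \mid x_{n-1}, x_{n-2})$$

where $x_n$ denotes the $n$-th letter or word of the text.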

Hence, a vector containing the state and transition information can be computed for each text document. We expect texts written by the same author to have similar vectors, so these characteristic vectors can be fed into the SVM for classification.

This algorithm will be implemented in Java, and the resulting state and transition information will be stored in output files. At a later stage, these files will be used as input data for the SVM classifier implemented in Matlab.
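A minimal sketch of how the transition information might be estimated is given below. It is written in Python for illustration only, since the project plans a Java implementation. It estimates each probability by relative frequency, which is an assumption (the text does not state the estimator), and the function names `trigram_transitions` and `transition_vector` are hypothetical.

```python
from collections import Counter


def trigram_transitions(symbols):
    """Estimate P(next | previous two) by relative frequency.

    symbols is a sequence of letters or words. The result maps each observed
    trigram (a, b, c) to the estimated probability of c following the pair (a, b).
    """
    pair_counts = Counter()
    trigram_counts = Counter()
    for a, b, c in zip(symbols, symbols[1:], symbols[2:]):
        pair_counts[(a, b)] += 1
        trigram_counts[(a, b, c)] += 1
    return {tri: n / pair_counts[tri[:2]] for tri, n in trigram_counts.items()}


def transition_vector(probabilities, trigrams):
    """Fixed-length vector over a chosen list of trigrams (0.0 if unseen)."""
    return [probabilities.get(tri, 0.0) for tri in trigrams]


probs = trigram_transitions(list("abcabd"))
print(probs[("a", "b", "c")])
```

Using the same list of trigrams for every text gives vectors of equal length, which is what a classifier such as an SVM expects as input.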

Project Approach

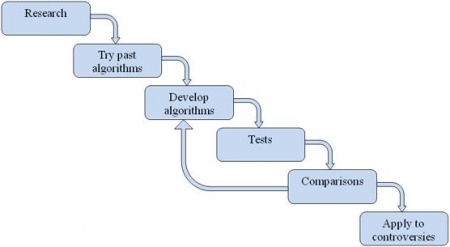

The project team had to choose between several different approaches to the project. After much consideration, and based on its advantages, the team decided to take the waterfall approach. The waterfall model was chosen largely because it gives the team a step-by-step approach to meeting the project requirements, and avoids complicating the process by limiting the number of iterations of each part. The waterfall model that the team brainstormed and finalized is shown in Figure 4.

Based on the figure above, the team has defined six parts in the waterfall model, and each part must be completed before proceeding to the next. The issues and risks that may arise in each part are summarised in Table 1.

| Part | Issues or risks that may arise |
|---|---|
| Research | Might spend too long on research, causing delay in the project |
| Try past algorithms | Re-implementing the algorithms might take time and cause delay; the implementations must be carefully verified to yield results comparable to those of past research |
| Develop algorithms | Programming difficulties might cause a roadblock |
| Tests | Applying too many tests to different texts |
| Comparisons | Might obtain incorrect results that are inconsistent with past research results |
| Apply to controversies | Might obtain unsatisfactory results that fail to identify the author |

Project management

Process Structure, Task Allocation and Schedule

Milestones and Timeline

This project has several milestones, from the proposal seminar through to the end, including a seminar, a final report and a project exhibition. In addition to these deliverables required by the school, the team members have added internal milestones. Furthermore, each team member reports their findings on the online wiki, and meetings are held to discuss issues based on these reports. This is done twice per week to ensure that the project progresses smoothly and is completed by the deadline. The deliverables, all of which will be delivered as a team, are summarized in the table below.

| Events | Date | Action By |
|---|---|---|
| Proposal Seminar | 11th August 2010 | Jie Dong, Leng Tan, Tien-en Phua |
| Stage 1 Design Document | 23rd August 2010 | Jie Dong, Leng Tan, Tien-en Phua |
| Peer Review of Stage 1 Design | 30th August 2010 | Jie Dong, Leng Tan, Tien-en Phua |
| Progress Report | 4th September 2010 | Jie Dong, Leng Tan, Tien-en Phua |
| Interim Performance | 11th September 2010 | Jie Dong, Leng Tan, Tien-en Phua |
| Final Seminar | 2nd May 2011 | Jie Dong, Leng Tan, Tien-en Phua |
| Final Performance | 23rd May 2011 | Jie Dong, Leng Tan, Tien-en Phua |
| Project Exhibition | 3rd June 2011 | Jie Dong, Leng Tan, Tien-en Phua |

All of the deliverables above require contributions from all team members. However, the workload for this project was divided among the team members based on the Work Breakdown Structure (Table 3).

| WBS ID | Description | Responsible |
|---|---|---|
| 1. | Text Authorship | Jie Dong, Leng Tan, Tien-en Phua |
| 1.1 | Research on project and controversies | Jie Dong, Leng Tan, Tien-en Phua |
| 1.2 | Proposal Seminar | Jie Dong, Leng Tan, Tien-en Phua |
| 1.3 | Research Methods | Jie Dong, Leng Tan, Tien-en Phua |
| 1.3.1 | Function Word Frequency Analysis | Tien-en Phua |
| 1.3.2 | Word Recurrence Interval | Leng Tan |
| 1.3.3 | Trigram Markov Chain | Jie Dong |
| 1.4 | Stage 1 Design Document | Jie Dong, Leng Tan, Tien-en Phua |
| 1.5 | Peer Review of Stage 1 Design | Jie Dong, Leng Tan, Tien-en Phua |
| 1.6 | Software Architecture Design | Jie Dong, Leng Tan, Tien-en Phua |
| 1.7 | Development of Algorithm | Jie Dong, Leng Tan, Tien-en Phua |
| 1.7.1 | Function Word Frequency Analysis | Tien-en Phua |
| 1.7.2 | Word Recurrence Interval | Leng Tan |
| 1.7.3 | Trigram Markov Chain | Jie Dong |
| 1.8 | Progress Report | Jie Dong, Leng Tan, Tien-en Phua |
| 1.9 | Test and Evaluation | Jie Dong, Leng Tan, Tien-en Phua |
| 1.9.1 | Function Word Frequency Analysis | Tien-en Phua |
| 1.9.2 | Word Recurrence Interval | Leng Tan |
| 1.9.3 | Trigram Markov Chain | Jie Dong |
| 1.10 | SVM Implementation | Jie Dong, Leng Tan, Tien-en Phua |
| 1.10.1 | Code development | Jie Dong, Leng Tan, Tien-en Phua |
| 1.10.2 | Testing of developed code | Jie Dong, Leng Tan, Tien-en Phua |
| 1.11 | Interim Performance Report | Jie Dong, Leng Tan, Tien-en Phua |
| 1.12 | Software Modification | Jie Dong, Leng Tan, Tien-en Phua |
| 1.13 | Analysis of Results | Jie Dong, Leng Tan, Tien-en Phua |
| 1.14 | Comparison of Results | Jie Dong, Leng Tan, Tien-en Phua |
| 1.15 | Implementation on controversies | Jie Dong, Leng Tan, Tien-en Phua |
| 1.16 | Final Seminar | Jie Dong, Leng Tan, Tien-en Phua |
| 1.17 | Final Report | Jie Dong, Leng Tan, Tien-en Phua |
| 1.18 | Final Performance | Jie Dong, Leng Tan, Tien-en Phua |
| 1.19 | Final Project Exhibition | Jie Dong, Leng Tan, Tien-en Phua |

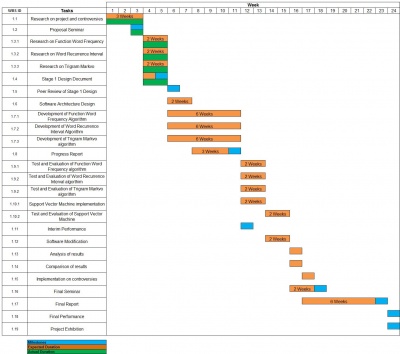

According to the work breakdown structure, each team member is responsible for one of the data extraction techniques. This allows the three tasks to proceed in parallel, maximizing efficiency and minimizing wasted time. Furthermore, a Gantt chart (see Figure 5) was produced, including the additional internal milestones that the team set to monitor project progress.

Risk management

The team has evaluated the different risks involved in this project. For simplicity, the team has divided the risks into two categories: Technical Risk Analysis and Occupational Health and Safety. Team members are advised to fully understand the risks that may occur in the project and to take preventive measures to avoid them. In the tables below, each priority score is the product of the probability rating and the impact rating.

Technical Risk Analysis

| Risk | Preventive Measures | Probability Rating /10 | Impact Rating /10 | Priority Score /100 |
|---|---|---|---|---|
| Developing the wrong software functions | Design properly before writing the code | 10 | 9 | 90 |
| Falling behind schedule | Regularly monitor the project schedule | 7 | 9 | 63 |
| Team members unclear about the task given | Hold a brief discussion before each working session in the laboratory | 6 | 8 | 48 |
| Unfamiliarity with the programming language | Study and understand the basics of the programming language before undertaking software development | 5 | 6 | 30 |
| Unstable resources, such as computer or server crashes | Regularly save the files | 3 | 5 | 15 |

Occupational Health and Safety

| Risk | Preventive Measures | Probability Rating /10 | Impact Rating /10 | Priority Score /100 |
|---|---|---|---|---|
| Back and neck injury due to sitting with bad posture | Sit in an upright position and obtain a comfortable chair | 10 | 6 | 60 |
| Hand and leg soreness due to lack of rest | Regularly stand up and walk around to exercise the limbs | 5 | 4 | 20 |
| Inadequate sleep resulting in headache or migraine | Rest when required | 6 | 5 | 30 |
| Depression and anxiety due to inability to solve programming problems | Seek help if a mental roadblock occurs | 9 | 4 | 36 |
| Eye strain from staring at the computer screen for too long | Look away from the monitor for 5 minutes after every 30 minutes of work | 3 | 10 | 30 |

Budget

An amount of two hundred and fifty dollars was allocated to each student for this project, resulting in a total budget of seven hundred and fifty dollars.

Total allocated budget - $750

Expenses

- Printing of research documents - $200

- Purchase of resources - $200

- Additional resources - $100

Total Expected Expenses - $500

Printing of research documents covers past research from various institutions, which the project team will analyze and evaluate to review the work carried out to date.

Purchase of resources includes books written about the authorship of the Letter to the Hebrews. Resources such as online books would also be purchased for use as training and testing data to measure the accuracy of our classification model.

Additional resources include the purchase of storage devices, such as compact discs, to store data, software programs, handbooks and reports.

PDF Version

File:Stage 1 Design Document.pdf

References

[1] Eddy, H. T., The Characteristic Curves of Composition, <http://www.jstor.org/stable/1763509>, viewed August 2010.

[2] Smith, M. W. A., Recent experience and new developments of methods for the determination of authorship, ALLC Bulletin, 11:73–82, 1983.

[3] Hilton, J. L., On Verifying Wordprint Studies: Book of Mormon Authorship, BYU Studies, vol. 30, 1990.

[4] Holmes, D. I. & Forsyth, R. S., The 'Federalist' Revisited: New Directions in Authorship Attribution, Literary and Linguistic Computing, 10, 111-127, 1995.

[5] Stamatatos, E., Fakotakis, N. & Kokkinakis, G., Automatic Text Categorization in Terms of Genre and Author, Computational Linguistics, vol. 26, no. 4, pp. 471-495(25), December 2000.

[6] Baayen, H., Halteren, H. V., Neijt, A. & Tweedie, F., An experiment in authorship attribution, 6th JADT, 2002.

[7] Juola, P. & Baayen, H., A controlled corpus experiment in authorship attribution by crossentropy, Proceedings of ACH/ALLC-2003, 2003.

[8] Sabordo, M., Shong, C. Y., Berryman, M. J. & Abbott, D., Who Wrote the Letter to the Hebrews? – Data Mining for Detection of Text Authorship, SPIE vol. 5649, pp. 513–524, 2004.

[9] Putnins, T. J., Signoriello, D. J., Jain, S., Berryman, M. J. & Abbott, D., Who wrote the Letter to the Hebrews? Data mining for detection of text authorship, University of Adelaide, 2005.

[10] Anderson, P. C., The Epistle to the Hebrews and The Pauline Letter Collection, Harvard Theological Review, Vol. 59, No. 4 (Oct., 1966), pp. 429-438.

[11] Zhao, Y. & Zobel, J., Effective and Scalable Authorship Attribution Using Function Words, RMIT University.

See also

- Authorship detection: 2010 group

- Authorship detection: Who wrote the Letter to the Hebrews?

- Minutes of Meeting 2010: Who wrote the Letter to the Hebrews?

- Critical design review 2010: Who wrote the Letter to the Hebrews?

- Progress Report 2010: Who wrote the Letter to the Hebrews?

- Final report 2010: Who wrote the Letter to the Hebrews?

- Youtube Video Presentation 2010: Who wrote the Letter to the Hebrews?