Final report 2010: Who wrote the Letter to the Hebrews?

Acknowledgement

The team wishes to thank Professor Derek Abbott and Dr Brian Ng for their support and guidance throughout the course of this project. The team would also like to thank Dr Matthew Berryman for his input and guidance. The project could not have progressed well without their support and assistance.

Executive Summary

The New Testament of the Bible contains a number of texts with disputed or unknown authorship. One of the most widely debated is the Letter to the Hebrews. A number of authorship attribution methods have been developed and refined over the years, and this project aims to implement these methods to address the question of who wrote the Letter to the Hebrews. Three methods were selected, researched and enhanced for authorship attribution: Function Word Analysis, Word Recurrence Interval and the Trigram Markov model. The effectiveness of these methods is analysed, and they are then applied to the Letter to the Hebrews. In addition, a Support Vector Machine is implemented to develop a classification model that uses the feature vectors extracted from a given text. The results obtained from these methods should shed light on the possible authors and eliminate other candidates. This information will be essential in identifying the rightful author of the Letter to the Hebrews.

Introduction

Project Objectives

This project aims to provide an unbiased approach, using authorship attribution algorithms to analyse the Letter to the Hebrews. In doing so, the project team will examine the results obtained, identify the likely authors of the letter where possible, and eliminate others from the list of candidates. The project will further enhance three feature extraction algorithms in order to identify the author of the letter: frequency of occurrence of function words, Word Recurrence Interval and the Trigram Markov model. In addition, a Support Vector Machine will be implemented to develop a classification model. The Support Vector Machine has been demonstrated to be accurate in classification (as discussed in section 4) and should be able to contribute significant evidence regarding the author of the Letter to the Hebrews. This project also aims to apply authorship attribution algorithms to languages other than English.

Project Approach

A number of algorithms and techniques have been used in authorship attribution. One technique is to analyse an author's other works and compare them with the disputed text to see whether its style is similar. Another is to analyse the author's character and knowledge of the subject matter, and to judge whether the disputed text could be attributed to the author on that basis. However, these approaches often produce results that scholars view as biased, and such results often end up being debated. In this report, the team focuses on an unbiased approach: analysing and observing key features in a text that can distinguish one author from another. Such algorithms have been shown in the past to be effective markers in identifying the rightful author of a disputed text (see section 1.4). A number of algorithms exist, such as Word Recurrence Interval, the Kolmogorov-Smirnov test, the Trigram Markov model, the Gutman (LZ preprocessing) method and frequency of occurrence of function words. This project focuses on three of them to provide evidence on who wrote the Letter to the Hebrews: frequency of occurrence of function words, Word Recurrence Interval and the Trigram Markov model.

Structure of This Report

In this report, the background of the Letter to the Hebrews is briefly discussed, followed by a brief overview of the Bible, in particular the New Testament. The aims of this project and the approach taken are provided in sections 1.1 and 1.2 respectively. A short discussion of past studies in the field of authorship attribution is provided in section 1.4. The significance of the project and the data sets used to analyse the effectiveness of the algorithms are described in sections 1.5 and 1.6. Thereafter, this report presents the task of processing the text into a suitable form before specific features, called feature vectors, are extracted and presented to the Support Vector Machine to develop a classification model. A discussion of the Support Vector Machine is provided in section 4 for readers who would like to know more about it. The results from the three algorithms are presented in their respective sections in the following order: Function Word Analysis, Word Recurrence Interval and the Trigram Markov method. The data used in the analysis are the English texts, the Federalist Papers, the English translation of the New Testament and the Koine Greek text of the New Testament. Thereafter, the project management aspect of this project is presented, and the last section presents a conclusion.

Previous Studies

Over the past centuries, researchers have devoted considerable effort to authorship detection, resulting in a large number and diversity of methods. The earliest study on this subject was conducted by Mendenhall [4], who used the characteristic curve of composition. However, it was heavily criticised by Florence in 1904 on the grounds that the technique was principally controlled by the language in which the text is written. Florence stated that there was only a very small difference between the characteristic curves of various English writers; hence it is presumable that completely different authors writing in the same language will produce approximately the same characteristic curve. This leads to the conclusion that characteristic curves are too narrow a measure to properly distinguish an author's style of writing.

In 1964, Mosteller and Wallace published a book titled 'Inference and Disputed Authorship: The Federalist', providing statistical evidence that led to a conclusion on who wrote the twelve disputed Federalist Papers. The Federalist Papers were written in 1787-1788 by Alexander Hamilton, James Madison and John Jay. The authors of these 85 papers were unknown at the time, as the papers were signed "Publius". In 1807, a Philadelphia periodical received a list, said to have been left by Hamilton prior to his death in 1804, that assigned the various papers to their authors, namely Alexander Hamilton, James Madison and John Jay. However, in 1818, James Madison claimed that he had written twelve of the Federalist Papers that Hamilton had ascribed to himself. In a quest to settle the dispute over the Federalist Papers, Mosteller and Wallace examined the use of 'marker words' that were used with very different frequencies by the two authors, Hamilton and Madison. In total, 70 function words were applied and the results were presented. The twelve disputed papers were then attributed to James Madison.

In 1983, Smith [5] investigated the use of word and sentence length as discriminators for authorship attribution, using the chi-square measure as the method of detection. However, problems that arose in the interpretation of the chi-square measure led him to conclude that authorship attribution based on these measures was not feasible, as it led to incorrect results.

In 1990, Hilton [12] applied word-pattern ratios to 'The Book of Mormon', such as the number of times 'a' appears as the first word of a sentence divided by the number of sentences in a paragraph. He found that both non-contextual word frequencies and word-pattern ratios have relatively good differentiating power, suggesting that they would be a good first choice in authorship attribution. However, Hilton also noted that vocabulary richness measurements are not a good method for differentiating texts: they provide considerable information but have several limitations in detecting differences and similarities between texts. In conclusion, Hilton's results highlighted the fact that simple feature extraction, such as frequency of word occurrence, would be a good choice.

In 1995, Holmes and Forsyth [15] used Principal Component Analysis (PCA) and Linear Discriminant Analysis (LDA) for classification, with vocabulary richness and word frequency analysis for data preparation. The main idea was to transform the observed variables into a new set of uncorrelated variables. This was done with the intention of reducing the dimensionality of the problem and of finding new information in the variables for easier interpretation. These components are linear combinations of the observed variables, so it was anticipated that the first few components would capture most of the variation in the original texts. Their results showed that using vocabulary richness for feature extraction and LDA for classification gives excellent results, suggesting that vocabulary richness variables provide a good set of discriminators for authorship attribution. Another conclusion was that, if word frequency analysis is used, it is advisable to use large sets of common words (for example, 50 or more) to obtain meaningful results, which concurs with Burrows' research [16].

In 2001, Stamatatos et al. [8] proposed a new method of text categorisation by genre or author using Natural Language Processing (NLP). They concluded that the method achieved relatively high classification accuracy. They took advantage of existing NLP tools by using analysis-level style markers that provide useful stylistic information. Their method outperformed the lexically based methods for authorship attribution that existed at that time. The results show that stylistic differences are better suited to text genre detection than to authorship attribution, as stylistic differences are clearer between text genres than between authors.

In 2002, Baayen [18] conducted an experiment on students' essays, using the most frequent function words for feature extraction and PCA and LDA as the classification techniques. He concluded that LDA is a more appropriate technique than PCA for authorship attribution, because the simple use of PCA with the highest frequency function words fails to detect the authorial structure of the texts or to produce insightful clustering. In addition, it was found that simply including punctuation marks in the analysis enhanced the classification accuracy, suggesting that punctuation marks may prove to be effective style markers, especially for texts that have not been altered or modified by editors for publication.

In 2003, Juola, in collaboration with Baayen [19], used a method known as cross-entropy in authorship attribution. The technique takes a "measurement on unpredictability of a given event with a specific model of events and expectations". In other words, the method can quantify the difference between two sets of data and measure the distance between them. Juola argued that the cross-entropy method performs the task more accurately than PCA or LDA, highlighting that it can be applied effectively to shorter texts. Juola claimed that the method could even accurately determine the authorship of a disputed text using less than a page of data. Furthermore, the cross-entropy method could be applied to a wide variety of linguistic and text-analysis problems, which suggests that the measured "distance" is a numerical measure in the exact sense, one that can be compared with other scalar measurements from different types of document.

In 2004, Sabordo [20] applied data compression techniques and the Word Recurrence Interval (WRI) to the Letter to the Hebrews in the New Testament. Sabordo concluded that the Prediction by Partial Matching (PPM) and GZip compression techniques were not effective in analysing the similarities or differences in the pattern or relationship between texts. More specifically, the GZip and PPM compression techniques produce graphical results with poor discrimination ability due to overlapping standard deviations. However, the WRI algorithm proved to be useful and successful, as the technique could identify similarities in the styles of texts written by the same author.

In 2005, Putnins et al. [21] used function word frequency and the Trigram Markov model with Multiple Discriminant Analysis (MDA), together with Word Recurrence Interval. Their results showed that function word frequency and the Trigram Markov model give relatively high accuracy in authorship attribution and can therefore provide statistical evidence for authorship attribution problems. However, they also showed that the WRI performs poorly in authorship attribution.

Motivation

Since its invention in the 1960s, the internet has developed at such a rapid rate that it has changed people's lifestyles throughout the world. An increasing amount of economic and intellectual activity uses the global internet as a medium, and scrutinising the authenticity of documents has become more challenging. In addition, the available data are often vast and noisy, meaning that they may be imprecise and their structure complex. A purely statistical technique would not produce satisfactory results, and data mining was therefore developed. It is a technique that filters out noisy data and extracts useful and relevant information hidden within large volumes of data.

Data mining techniques can perform well in a wide range of applications. Three major fields of application are plagiarism analysis, authorship identification and near-duplicate detection. In plagiarism analysis, the technique is able to determine the similarities between objects such as source code, articles and music, and hence provides statistical evidence of plagiarism. Authorship identification is another important application, through which many existing controversies, such as the Shakespeare authorship question and the Letter to the Hebrews, may be unravelled. The motivation of this project is to provide information pertaining to the author of the Letter to the Hebrews.

Corpus

To evaluate the accuracy of the algorithms and to identify a set of conditions that would be optimal for authorship attribution, a corpus was assembled. Four sets of data were used for this purpose, namely English texts, the Federalist Papers, the King James Version of the Bible, and the Koine Greek text of the New Testament.

The English texts were used to develop and evaluate the effectiveness of the algorithms. They were chosen from the Project Gutenberg archives, which provide many texts by various authors. In this project, a set of twenty-six texts from each author was obtained from Project Gutenberg. Four texts from each author were set aside and labelled as disputed texts, while the remaining twenty-two texts were used to develop the classification model of the Support Vector Machine. In total, there are one hundred and thirty-two training texts and twenty-four disputed texts.

The Federalist Papers comprise eighty-five papers written by Alexander Hamilton, James Madison and John Jay. Of the eighty-five papers, fifty-one were written by Hamilton, fourteen by Madison and five by Jay; three were a collaboration between Hamilton and Madison, and twelve papers, No 49-58 and No 62-63, are disputed. To obtain an accurate classification model, the three papers written jointly by Hamilton and Madison were removed. The Federalist Papers provide an idealised model for the New Testament: as shown in Table 1, most of the books of the New Testament are attributed to Paul, just as most of the Federalist Papers were written by Alexander Hamilton.

The King James Version of the Bible is an English translation of the original texts (Hebrew for the Old Testament and Koine Greek for the New Testament); the translation began in 1604 and was completed in 1611. It was the work of 47 scholars from the Church of England. However, translation itself might affect the accuracy of authorship attribution, as word-for-word translation was occasionally impossible due to differences between the languages, such as sentence structure and grammar. The New Testament was originally written in Koine Greek. Analysing the New Testament in its original Koine Greek preserves the original fingerprint of the author and removes the fingerprint of the translators. This approach provides a more accurate classification model for analysing the Letter to the Hebrews.

Background

Background of the Letter to the Hebrews

According to the Catholic Encyclopedia, the Letter to the Hebrews, also known as the Epistle to the Hebrews, is said to have been written in late 63 AD or early 64 AD. Traditional scholars have held that the letter was written to the Jews of that time. The authorship of the letter has been in question since the time of Origen (185 - 256 AD). Numerous authorship attribution techniques have been applied to the text, but the results have often been inconclusive or have only been able to show that Paul of Tarsus, also known as the Apostle Paul, was most likely not the author of Hebrews.

In the 4th century, Jerome and Augustine of Hippo supported Paul's authorship, and the Letter to the Hebrews was identified as the fourteenth letter by Paul (Fonck, 1910). However, George Milligan commented that it was unlikely that Paul was the author of Hebrews, because the anonymity of the letter is not consistent with Paul's pattern and the style of writing also differs from Paul's. Conversely, Clement of Alexandria claimed that Paul did not indicate his name because the Hebrews had conceived a prejudice against him. Furthermore, Clement of Alexandria commented that Paul was likely the author of the original letter to the Hebrews but not of the Greek version, which was the work of Luke, who translated Paul's letter.

A list of potential authors has been generated over the years by Biblical scholars. One of the criteria is, for example, the author's knowledge of the subject of the Letter to the Hebrews. Barnabas was proposed as a potential author because he was from the tribe of Levi and because the major theme of the letter focuses on the Levitical law and the priesthood. The Levites are often recognised as the tribe chosen for the priesthood. Others claim that Priscilla and Aquila might have been the rightful authors, as the letter refers to a person called Timothy, whom Priscilla and Aquila knew. Paul is the most widely debated candidate, as the writing in this letter is similar to that of his other works. Clement of Rome was put forward as a candidate due to his close association with Paul; that is, if Paul did not write the letter, it is likely that Clement did. Peter has also been listed as a potential author by some Biblical scholars, as he was seen as one of the leaders of the Church at that time and had a close association with the Jews, or Hebrews.

Background of the Bible

The Bible consists of 66 books written by various authors. It is divided into two sections, the Old Testament and the New Testament. The Old Testament consists of 39 books and the New Testament of 27 books. The authors of the 27 books of the New Testament are shown in Table 1. In addition, most of the books are said to have been written in Koine Greek.

| Texts | Authors |
|---|---|
| The Gospel of Matthew | Matthew |
| The Gospel of Mark | Mark |
| The Gospel of Luke | Luke |
| The Gospel of John | John |
| The Acts of the Apostles | Luke |
| The General Epistle of James | James |
| The First Epistle of Peter | Peter |
| The Second Epistle of Peter | Peter |
| The First Epistle of John | John (might be disputed) |
| The Second Epistle of John | John (might be disputed) |
| The Third Epistle of John | John (might be disputed) |
| The General Epistle of Jude | Jude |
| The Book of Revelation | John (might be disputed) |
| The Epistle to the Romans | Paul |
| The First Epistle to the Corinthians | Paul |
| The Second Epistle to the Corinthians | Paul |
| The Epistle to the Galatians | Paul |
| The Epistle to the Philippians | Paul |
| The Epistle to Philemon | Paul |
| The Epistle to Titus | Paul (might be disputed) |
| The First Epistle to Timothy | Paul (might be disputed) |
| The Second Epistle of Paul to Timothy | Paul (might be disputed) |
| The First Epistle to the Thessalonians | Paul |
| The Second Epistle to the Thessalonians | Paul (might be disputed) |
| The Epistle to the Ephesians | Paul (might be disputed) |
| The Epistle to the Colossians | Paul (might be disputed) |
| The Epistle to the Hebrews | Unknown |

There are a number of books in the New Testament that have disputed or unknown authorship. Those whose authors are not widely agreed upon are set aside and not included in the corpus in this project.

Pre-process Text

The Greek and English texts must be pre-processed before the feature extraction algorithms are applied to them. This filtering ensures that the text retains only the information needed to attribute it to its author. Since one of the project objectives was to approach authorship attribution statistically, the team decided to remove all non-alphabetic characters from the text, having concluded that these characters do not contribute to authorship attribution. The following subsections discuss the different scenarios encountered by the team and the solutions implemented for pre-processing the text. Compared with the English texts, the Greek texts do not contain punctuation, which makes them much simpler to pre-process.

Text Divider

A significant issue detected by the team was that the known texts for each author differed in length. It is important that the known texts have approximately the same length so that the results of authorship attribution are not skewed. For this reason, the team developed a program named "chopper.java", which separates a text file into a set of text files of approximately the same length.
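The idea behind the text divider can be sketched as follows. This is a minimal illustration only, not the project's "chopper.java": the class name `TextChopper`, the method `chop` and the choice of measuring length in words are all assumptions made for the example.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

/** Hypothetical sketch of a text divider; not the project's chopper.java. */
class TextChopper {

    /**
     * Splits a text into consecutive chunks of at most maxWords words,
     * spreading the words evenly so that no chunk is much shorter than the rest.
     */
    static List<String> chop(String text, int maxWords) {
        if (maxWords <= 0) {
            throw new IllegalArgumentException("maxWords must be positive");
        }
        List<String> chunks = new ArrayList<>();
        String trimmed = text.trim();
        if (trimmed.isEmpty()) {
            return chunks;
        }
        String[] words = trimmed.split("\\s+");
        // Smallest number of chunks that keeps every chunk within maxWords.
        int numChunks = (words.length + maxWords - 1) / maxWords;
        int base = words.length / numChunks;   // minimum words per chunk
        int extra = words.length % numChunks;  // the first 'extra' chunks get one more word
        int pos = 0;
        for (int i = 0; i < numChunks; i++) {
            int size = base + (i < extra ? 1 : 0);
            chunks.add(String.join(" ", Arrays.copyOfRange(words, pos, pos + size)));
            pos += size;
        }
        return chunks;
    }
}
```

With this scheme, chunk lengths differ by at most one word, which avoids a short trailing fragment that could skew the per-text statistics.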

Punctuations

In the pre-processing algorithm, the program automatically reads in the text and removes all of the punctuation marks that occur in it.

However, upon further research, the team found that not all punctuation marks should be removed in the same way. The table below shows the punctuation marks that needed to be handled as exceptions.

| Punctuation Mark | Method to Resolve | Example |
|---|---|---|
| Apostrophes, hyphens | Remove the apostrophe or hyphen and close the gap by joining the remaining characters | "Don't" becomes "Dont"; "hand-craft" becomes "handcraft" |
| Parentheses, brackets, quotation marks | Upon encountering the opening mark, scan for the matching closing mark and remove both of them together | "hello world." becomes hello world |
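The rules in the table can be sketched with regular expressions. This is an illustrative sketch rather than the project's implementation: the class `PunctuationCleaner` is hypothetical, and replacing the remaining punctuation marks with a space (so that neighbouring words are not fused) is an assumption, since the report does not state how those marks are removed.

```java
/** Hypothetical sketch of the punctuation rules; not the project's code. */
class PunctuationCleaner {

    static String clean(String text) {
        // Apostrophes and hyphens: delete the mark and join the surrounding characters.
        String s = text.replaceAll("['\u2019\\-]", "");
        // Parentheses, brackets and quotation marks: delete opening and closing marks.
        s = s.replaceAll("[()\\[\\]{}\"\u201C\u201D]", "");
        // Assumption: every other punctuation mark becomes a space.
        s = s.replaceAll("\\p{Punct}", " ");
        // Collapse the whitespace left behind by the removals.
        return s.trim().replaceAll("\\s+", " ");
    }
}
```

For instance, `PunctuationCleaner.clean("hand-craft")` yields `handcraft`, matching the first row of the table.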

Non-English ASCII Symbols and Control Characters

It was verified that the programming language, Java, supports a substantial number of character encodings [22].

For this reason, the program reads most non-English ASCII symbols and control characters correctly. Upon encountering these characters, the program removes them from the text, as the Greek and English texts should consist only of alphabetic characters. Hence it was not necessary to implement any exception handling for this case, as Java's built-in support is sufficient.

Carriage Returns and Line-feeds

The pre-processing algorithm scans the text and removes carriage returns and line-feeds. It reads each word in the text, converts it to lowercase and appends it to a single long sentence. Once the text file has been filtered, the algorithm writes the sentence to a newly created text file named "modified_filename.txt". These modified text files are the output of the pre-processing algorithm and serve as the input for the feature extraction algorithms.
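A minimal sketch of this normalisation step is given below, assuming an English text restricted to the letters A to Z. The class `TextNormaliser` is hypothetical and works on strings only; reading the input file and writing "modified_filename.txt" are left out.

```java
import java.util.Locale;

/** Hypothetical sketch of the normalisation step; not the project's code. */
class TextNormaliser {

    /** Returns the words of the text, lowercased, on one line separated by single spaces. */
    static String normalise(String text) {
        StringBuilder out = new StringBuilder();
        // Splitting on whitespace also consumes carriage returns, line-feeds and tabs.
        for (String token : text.split("\\s+")) {
            // Drop everything that is not a letter (digits, symbols, control characters).
            String word = token.replaceAll("[^A-Za-z]", "").toLowerCase(Locale.ROOT);
            if (!word.isEmpty()) {
                if (out.length() > 0) {
                    out.append(' ');
                }
                out.append(word);
            }
        }
        return out.toString();
    }
}
```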

Koine Greek

The New Testament text was written in Koine Greek. In order to process Koine Greek, it is necessary to map the language into a form that Java can process. Figure 1 shows an example of what Koine Greek looks like. The left column shows each Greek letter in both upper and lower case. In this form, Java was not able to recognise the format in which the Koine Greek text was encoded.

The right column of Figure 1 shows the mapping of each Greek letter to its equivalent in what is known as beta code. Java was able to process the Greek equivalent simply as ASCII characters.
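The mapping can be illustrated with a small lookup, assuming the standard beta code letter assignments (for example theta to `q`, xi to `c`, psi to `y`, omega to `w`) and unaccented Unicode input. The class `BetaCode` is hypothetical, and folding capitals to lower case is a simplification consistent with the lowercase conversion used in pre-processing; Figure 1 remains the authoritative mapping for this project.

```java
/** Hypothetical sketch of a Greek to beta code transliteration. */
class BetaCode {

    // Beta code letters for U+03B1 (alpha) to U+03C9 (omega), in code point order.
    // Final sigma (U+03C2) and sigma (U+03C3) both map to 's'.
    private static final String LATIN = "abgdezhqiklmncoprsstufxyw";

    static String toBetaCode(String greek) {
        StringBuilder out = new StringBuilder();
        for (char ch : greek.toCharArray()) {
            char lower = Character.toLowerCase(ch);
            if (lower >= '\u03b1' && lower <= '\u03c9') {
                out.append(LATIN.charAt(lower - '\u03b1'));
            } else {
                out.append(ch); // spaces and any non-Greek characters pass through
            }
        }
        return out.toString();
    }
}
```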

Support Vector Machine

Background of SVM

As mentioned in the previous section, the Support Vector Machine (SVM) is the classification model used in this project. It is a relatively new classification technique, first invented by Vladimir Vapnik; the current standard incarnation was proposed by Vapnik and Corinna Cortes in 1995. When this classification algorithm first came out, it was used in the field of bioinformatics, for example in DNA sequence analysis. It has since become a very promising tool for machine learning and data classification.

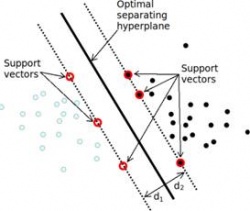

An SVM is a classifier: given a set of training data, each item marked as belonging to one of two categories, the SVM training algorithm builds a model that assigns new test data to one of these two groups. Intuitively, the data can be regarded as points located in space, and the SVM looks for support vectors (the data points with red circles in Figure 2) that form a linear boundary for each group. The gap between the two boundaries is referred to as the margin, and the SVM tries to find the optimal separating hyperplane that maximises the margin. For this reason, Support Vector Machines are also called maximum margin classifiers. When a new test point enters the system, it is assigned to one of the two groups based on which side of the gap it falls on.
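For reference, the maximum margin idea is usually written as the following optimisation problem. This is the standard textbook hard-margin formulation, not an equation taken from this report; the symbols $\mathbf{w}$ (normal vector of the hyperplane), $b$ (offset), $\mathbf{x}_i$ (training points) and $y_i \in \{-1, +1\}$ (group labels) are introduced here for illustration.

$$
\min_{\mathbf{w},\,b}\ \tfrac{1}{2}\lVert \mathbf{w} \rVert^{2}
\quad \text{subject to} \quad
y_i\,(\mathbf{w}\cdot\mathbf{x}_i + b) \ge 1, \qquad i = 1,\dots,N
$$

Under this formulation the margin has width $2/\lVert \mathbf{w} \rVert$, so minimising $\lVert \mathbf{w} \rVert$ maximises the margin, and a new point $\mathbf{x}$ is assigned to a group according to the sign of $\mathbf{w}\cdot\mathbf{x} + b$.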

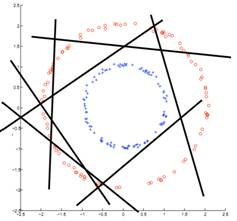

If the data are distributed in the way shown in Figure 2, they are called linearly separable, and a straight line can easily be fitted between the two categories. In practice, however, data points are not so neatly distributed. When they are not linearly separable, as in the situation in Figure 3, finding a linear gap can be extremely difficult.

In order to solve this problem, it is essential to map the non-linearly separable cases into linearly separable ones before applying the SVM. The choice of mapping function is important, and an inappropriate choice may lead to a situation in which the data are hardly separable. An example of an efficient mapping function is shown in Figure 4. Consider a set of N input data points where each point has two coordinate values (x1, x2). The positive samples of this set are inside a circular region while the negative samples are outside. Clearly, this problem is not linearly separable. However, after each data point is expanded using a function f that maps the 2-dimensional space to a 3-dimensional space, the transformed data points can be linearly separated by a plane, as shown in Figure 4.

When new test data are fed into the system, they are mapped using the same transform, and the group each point belongs to is predicted by observing which side of the plane it lies on.
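One mapping consistent with this description is the standard quadratic feature map below. It is offered as an assumption, chosen because it matches the inner-product-squared equivalence described in section 4.2; the radius $r$ and the assumption that the circular region is centred on the origin are introduced here for illustration.

$$
f(x_1, x_2) = \left(x_1^{2},\ \sqrt{2}\,x_1 x_2,\ x_2^{2}\right) = (z_1, z_2, z_3)
$$

Under this map, the circular boundary $x_1^{2} + x_2^{2} = r^{2}$ becomes the plane $z_1 + z_3 = r^{2}$ in the 3-dimensional space. Points inside the circle satisfy $z_1 + z_3 < r^{2}$ and points outside satisfy $z_1 + z_3 > r^{2}$, so the two groups fall on opposite sides of a plane.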


Classification Kernel Functions

Section 4.1 showed how strongly the choice of mapping function influences the performance of an SVM. In practice, however, a kernel function is used to define the mapping function implicitly. Past research has shown that computing the inner product in the higher dimensional space defined by the mapping function makes it possible to implicitly define the non-linear warping effect of f. Using the same example as in Figure 4, observe the equivalence of the following two ways of computing the inner product of the mapping results:

Method 1 - Explicit Mapping

Method 2 - Implicit Mapping

In explicit mapping, the data are mapped first and the inner product is then computed in the mapped space. In implicit mapping, the inner product is computed first in the original space and the result is squared. Hence kernel functions allow us to evaluate the inner product in the higher dimensional feature space defined by f without having to compute the mapping function f explicitly.
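The equivalence of the two methods can be checked numerically. The sketch below assumes the standard quadratic feature map f(x1, x2) = (x1^2, sqrt(2) x1 x2, x2^2), which is the map whose inner product equals the squared inner product in the original space; the class `QuadraticKernelDemo` and its method names are hypothetical.

```java
/** Hypothetical demonstration that explicit and implicit mapping agree. */
class QuadraticKernelDemo {

    /** Assumed feature map f from 2-D to 3-D: (x1^2, sqrt(2)*x1*x2, x2^2). */
    static double[] map(double[] p) {
        return new double[] { p[0] * p[0], Math.sqrt(2.0) * p[0] * p[1], p[1] * p[1] };
    }

    static double dot(double[] a, double[] b) {
        double sum = 0.0;
        for (int i = 0; i < a.length; i++) {
            sum += a[i] * b[i];
        }
        return sum;
    }

    /** Method 1: map both points first, then take the inner product in 3-D. */
    static double explicit(double[] x, double[] y) {
        return dot(map(x), map(y));
    }

    /** Method 2: take the inner product in 2-D, then square the result. */
    static double implicit(double[] x, double[] y) {
        double d = dot(x, y);
        return d * d;
    }
}
```

For x = (1, 2) and y = (3, 4), the inner product in the original space is 11, so both methods give 121 up to floating-point rounding. The implicit route never constructs the 3-dimensional vectors, which is the saving a kernel function provides.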

There are many types of kernel function, each of which defines a different mapping. Below is a list of some of the kernel functions available in the MATLAB Bioinformatics Toolbox, which are used as the choices of kernel function for the SVM classification model. Users can also define their own kernel functions.

Linear

The linear kernel is the simplest kernel function: it takes the inner product of the feature vectors in their original feature space plus an optional constant c. Kernel algorithms using a linear kernel are often equivalent to their non-kernel counterparts.

Gaussian Radial Basis Function (RBF)

A common choice of positive radial basis function (RBF) kernel in machine learning is the Gaussian kernel. Geometrically, the transformed points f(p) in the feature space induced by a positive semi-definite RBF kernel are equidistant from the origin and thus all lie on a hypersphere around the origin.

Quadratic

The quadratic kernel function is a specific type of polynomial kernel function where d = 2. The example shown in section 4.2 is the quadratic kernel in two dimensions: the inner product of the two points is computed in the original space and the result is squared, that is, K(x, y) = (x · y)^2.

Polynomial

The polynomial kernel is a non-stationary kernel. Polynomial kernels are widely used and are well suited to problems where all the training data are normalized. The kernel involves an adjustable slope a, a constant term c and a polynomial degree d.
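For reference, the four kernels described above are commonly written in the following textbook forms. These are not reproduced from this report or from the MATLAB documentation: $\mathbf{x}$ and $\mathbf{y}$ denote two feature vectors, $a$, $c$ and $d$ are the slope, constant and degree mentioned above, and the width parameter $\sigma$ of the Gaussian kernel is a symbol introduced here.

$$
\begin{aligned}
\text{Linear:} \quad & K(\mathbf{x}, \mathbf{y}) = \mathbf{x}^{\mathsf{T}}\mathbf{y} + c \\
\text{Gaussian RBF:} \quad & K(\mathbf{x}, \mathbf{y}) = \exp\!\left(-\frac{\lVert \mathbf{x} - \mathbf{y} \rVert^{2}}{2\sigma^{2}}\right) \\
\text{Quadratic:} \quad & K(\mathbf{x}, \mathbf{y}) = \left(a\,\mathbf{x}^{\mathsf{T}}\mathbf{y} + c\right)^{2} \\
\text{Polynomial:} \quad & K(\mathbf{x}, \mathbf{y}) = \left(a\,\mathbf{x}^{\mathsf{T}}\mathbf{y} + c\right)^{d}
\end{aligned}
$$

With $a = 1$ and $c = 0$, the quadratic form reduces to the squared inner product used in the example of section 4.2. For the Gaussian kernel, $K(\mathbf{x}, \mathbf{x}) = 1$ for every $\mathbf{x}$, which is why all mapped points lie at the same distance from the origin.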

Operation of SVM

The Support Vector Machine classifier in this project is implemented in MATLAB, since its Bioinformatics Toolbox already provides several built-in functions for SVMs. The two functions used are svmtrain and svmclassify.

In most cases we would like to evaluate a predictor by how well it predicts the labels of new data. This implies, however, that the system would have to be deployed before it could be evaluated. Ideally, it should be evaluated before deployment. This is achieved by the following steps:

- Collect a large set of training data

- Randomly divide it into two disjoint subsets: the training set and the testing set

- Apply the SVM to the training set to produce a classifier

- Measure the percentage of samples in the testing set that are correctly labelled by classifier

- Repeat steps above for different sizes of training sets
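The evaluation steps above can be sketched as follows. This is a minimal illustration in Python rather than the project's Matlab code, and a nearest-centroid classifier is used as a stand-in for the SVM so that the example has no external dependencies; all function names here are hypothetical.

```python
import random


def split_holdout(samples, train_size, seed=0):
    """Randomly divide labelled samples into disjoint training and testing sets."""
    rng = random.Random(seed)
    shuffled = list(samples)
    rng.shuffle(shuffled)
    return shuffled[:train_size], shuffled[train_size:]


def train_centroids(train):
    """Stand-in classifier (NOT an SVM): the mean feature vector of each label."""
    sums, counts = {}, {}
    for vector, label in train:
        acc = sums.setdefault(label, [0.0] * len(vector))
        for i, value in enumerate(vector):
            acc[i] += value
        counts[label] = counts.get(label, 0) + 1
    return {label: [s / counts[label] for s in acc] for label, acc in sums.items()}


def predict(centroids, vector):
    """Return the label whose centroid is closest to the vector."""
    def squared_distance(centroid):
        return sum((a - b) ** 2 for a, b in zip(vector, centroid))
    return min(centroids, key=lambda label: squared_distance(centroids[label]))


def accuracy(centroids, test):
    """Percentage of testing samples whose predicted label is correct."""
    correct = sum(1 for vector, label in test if predict(centroids, vector) == label)
    return 100.0 * correct / len(test)
```

Calling `split_holdout` with increasing values of `train_size` and recomputing `accuracy` each time corresponds to the final step of repeating the procedure for different training set sizes.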

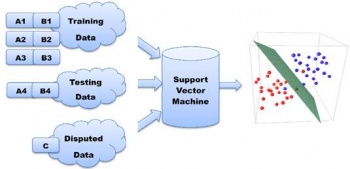

Figure 5 illustrates how the SVM model is constructed and how its accuracy is verified in the project. The aim of the project is to determine the authorship of the Letter to the Hebrews, which is therefore the disputed text C in Figure 5. The other groups, A and B, represent other New Testament texts whose authors are already known; the texts within each group are known to be written by one person. First, a portion of the texts from groups A and B, labelled A1 and B1, is chosen to train the model. Then, to find out how accurate the model is, the remaining texts from A and B are treated as if they were disputed. The predicted results are compared with the real authors to calculate the accuracy of the model.

Once confidence in the model reaches a sufficient level, the SVM is applied to C, the Letter to the Hebrews, to find the most likely author.

Multigroup Classification and Ranking System

Although SVM is an efficient tool for classification, it is designed to classify between only two groups. However, the data set described previously consists of texts written by more than two authors. For SVM to keep performing well in this situation, an algorithm for multi-group classification is needed. In this project, this is achieved by pairwise classification: authors are compared in pairs, and each comparison decides which of the two authors is preferred.

Suppose a data set contains texts each written by one of four authors, A, B, C and D, and there is also a disputed text that needs to be assigned to one of them. The SVM then needs to perform the 6 tests listed in Table 3 and record the predicted result of each round.

| Test | Texts by (first group) | Texts by (second group) | Predicted result |
|---|---|---|---|
| 1 | A | B | A |
| 2 | A | C | C |
| 3 | A | D | D |
| 4 | B | C | C |
| 5 | B | D | D |
| 6 | C | D | C |

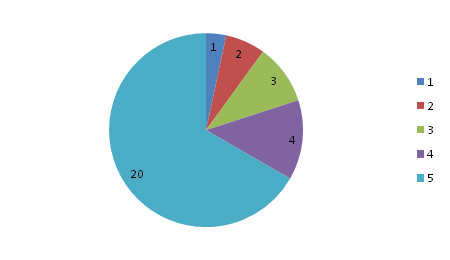

After all tests are executed, C appears most often among the predicted results. It can therefore be concluded that C is the most likely author of the disputed text, while B, which never appears, is the least likely. Expressed as a rank number, author C has a rank of 1, while B has a rank of 4. A plot of the ranking system is shown in Figure 6. How the ranking system is used and interpreted is explained further in a later chapter.
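The pairwise voting scheme can be sketched in Python as below. The function `rank_authors` and the lookup table standing in for the trained two-class SVMs are hypothetical names introduced for this illustration, and breaking ties by the order of the author list is an assumption, since the tie-breaking rule is not stated here.

```python
from itertools import combinations


def rank_authors(authors, pairwise_winner):
    """Run every pairwise test, count wins, and rank authors by win count.

    pairwise_winner(first, second) must return whichever of the two authors
    the two-class classifier prefers for the disputed text.
    """
    votes = {author: 0 for author in authors}
    for first, second in combinations(authors, 2):
        votes[pairwise_winner(first, second)] += 1
    # Stable sort: authors with equal votes keep their original order (assumption).
    ordered = sorted(authors, key=lambda author: -votes[author])
    ranks = {author: position + 1 for position, author in enumerate(ordered)}
    return ranks, votes


# Predicted results of the six tests in Table 3, used in place of real SVM output.
table3 = {("A", "B"): "A", ("A", "C"): "C", ("A", "D"): "D",
          ("B", "C"): "C", ("B", "D"): "D", ("C", "D"): "C"}
ranks, votes = rank_authors(["A", "B", "C", "D"], lambda a, b: table3[(a, b)])
```

With the Table 3 results, `votes` is 3 for C, 2 for D, 1 for A and 0 for B, so C receives rank 1 and B rank 4. For n authors the scheme needs n(n-1)/2 pairwise tests, which gives the 6 tests for four authors.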

Format Input and Output

As previously mentioned, the feature extraction algorithm produces feature vectors with the same dimensions for both training texts and disputed texts. These vectors should be organised in a way that the SVM classifier can understand and use for prediction. It is therefore crucial to set a standard format for the data file that is produced by the extraction algorithm and used as input to the SVM.

The structure of the SVM input file is shown in Figure 7. This file is generated by the Java program and contains two major parts:

- Three header lines:

  - Number of texts (including both disputed texts and training texts)

  - Number of disputed texts

  - Vector dimensions:

    - For the function words algorithm, the number of function words used

    - For Word Recurrence Interval (WRI), the number of key words whose word recurrence intervals are computed

    - For the Trigram Markov model, the number of trigrams (the same set of trigrams for all texts) used to characterise each text

- Data matrix:

  - Every row holds the features of one text. It starts with a string giving the author's name, or "unknown", followed by numbers whose meaning depends on the method. The matrix can be divided into two parts:

    - The upper matrix holds all the texts of known authorship

    - The lower matrix holds all the disputed texts

With this SVM input, the classification procedure is as follows:

- Using the header lines, separate the texts of known authorship from the disputed texts. The upper matrix forms the set of entries used for SVM training and the lower matrix is used as testing data. The Matlab program converts all author strings into numerical labels; texts by the same author receive identical labels.

- Create an SVM object with a kernel function (linear, quadratic, Gaussian Radial Basis Function or polynomial)

- Feed each row of the training matrix into the SVM using the svmtrain() function so that it learns each author's characteristics

- After all training entries have been fed into the SVM object, it is ready for classification

- Predict each test entry individually, compare the predicted author with the actual author, and compute the accuracy
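The first step of the procedure, reading the input file and converting author strings to numerical labels, can be sketched in Python. The exact layout of the file in Figure 7 is not reproduced here, so this sketch assumes one header value per line, whitespace-separated fields and author names without spaces; `parse_svm_input` is a hypothetical name and not part of the project's Java or Matlab code.

```python
def parse_svm_input(text):
    """Split an SVM input file into labelled training rows and disputed rows.

    Assumed layout: three header lines (number of texts, number of disputed
    texts, vector dimension), then one row per text: a name followed by numbers.
    """
    lines = [line.split() for line in text.splitlines() if line.strip()]
    n_texts, n_disputed, dimension = (int(lines[i][0]) for i in range(3))
    rows = lines[3:3 + n_texts]
    if len(rows) != n_texts:
        raise ValueError("fewer data rows than the header announces")

    label_ids = {}            # author name -> numerical label (1, 2, ...)
    training, disputed = [], []
    for index, row in enumerate(rows):
        name, values = row[0], [float(v) for v in row[1:]]
        if len(values) != dimension:
            raise ValueError("row %d has the wrong number of features" % index)
        if index < n_texts - n_disputed:      # upper matrix: known authors
            label = label_ids.setdefault(name, len(label_ids) + 1)
            training.append((label, values))
        else:                                  # lower matrix: disputed texts
            disputed.append(values)
    return training, disputed, label_ids
```

The training rows would then be passed to the two-class training routine and the disputed rows to the classification routine.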

Function Words

Texts are made up of a combination of content words and function words. Function words (also called grammatical words or synsemantic words) have little meaning of their own and are used to express grammatical relationships with other words within a sentence. Function words can be prepositions, pronouns, determiners, conjunctions, auxiliary verbs or particles, as shown in Table 4. A function word either gives grammatical information about other words in a sentence or clause, and cannot be isolated from them, or indicates the speaker's mental model of what is being said \[1\].

| Function Words | Examples |

|---|---|

| Prepositions | of, at, in, without, between |

| Pronouns | he, they, anybody, it, one |

| Determiners | the, a, that, my, more, much, either, neither |

| Conjunctions | and, that, when, while, although, or |

| Modal verbs | can, must, will, should, ought, need, used |

| Auxiliary verbs | be (is, am, are), have, got, do |

| Particles | no, not, nor, as |

In contrast, content words are highly correlated with the document topics and might not be suitable for authorship attribution as two authors writing on the same topic or about the same event may use many similar words and phrases \[3\]. Examples of content words are shown in Table 5.

| Content Words | Examples |

|---|---|

| Nouns | John, room, answer, Selby |

| Adjectives | happy, new, large, grey |

| Full verbs | search, grow, hold, have |

| Adverbs | really, completely, very, also, enough |

| Numerals | one, thousand, first |

| Interjections | eh, ugh, phew, well |

| Yes/No answers | yes, no (as answers) |

In authorship attribution, function words are appealing because their usage forms part of an author's writing style. The incidence of function words is attributed to authorial style and is not affected by the content of the text \[3\]. The frequency of occurrence of a particular function word differs from author to author, as does the choice of function words used in composing a text. Hence each author is expected to have a characteristic set of function words, each appearing at a characteristic rate.
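As an illustration of how function word usage becomes a feature vector, the Python sketch below counts each function word in a text and expresses it as a rate per thousand words. The tokenisation rule (lower-cased runs of letters and apostrophes, English only) and the per-thousand normalisation are assumptions made for this example; the project's own extraction program may count differently.

```python
import re
from collections import Counter


def function_word_rates(text, function_words):
    """Return one rate per thousand words for each function word, in order."""
    tokens = re.findall(r"[a-z']+", text.lower())
    counts = Counter(tokens)
    total = len(tokens)
    if total == 0:
        return [0.0 for _ in function_words]
    return [1000.0 * counts[word] / total for word in function_words]


rates = function_word_rates("The cat and the dog and the bird", ["the", "and", "of"])
```

Every text is measured against the same ordered list of function words, so all feature vectors have the same dimension, as the SVM input format requires.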

In 2005, a student from the University of Adelaide identified thirty frequently occurring words, counted their occurrences in New Testament texts by different authors, and used the counts to attribute the authorship of the letter to the Hebrews.

| and | the | of | to | they | that |

| he | in | him | unto | them | a |

| was | with | when | i | paul | which |

| for | all | had | were | god | said |

| his | we | this | from | but | not |

In addition, Mosteller and Wallace \[2\] identified about 70 function words, applied them in the analysis of the Federalist Papers and produced conclusive results that attributed the twelve disputed texts to James Madison.

| a | do | is | or | this | all | down | it | our | to |

| also | even | its | shall | up | an | every | may | should | upon |

| and | for | more | so | was | any | from | must | some | were |

| are | had | my | such | what | as | has | no | than | when |

| at | have | not | that | which | be | her | now | the | who |

| been | his | of | their | will | but | if | on | then | with |

| by | in | one | there | would | can | into | only | things | your |

Automated Function Words

The challenge with Function Word Analysis is selecting a set of function words that is distinctive enough to attribute a disputed text to its rightful author. The choice of function words is the key to an accurate classification of a disputed text. It is necessary to obtain, from a set of texts, a set of features that is able to distinguish one author from another. In the Federalist Papers, Mosteller and Wallace noticed that the rates at which Hamilton used certain words differed from those of Madison, as shown in Table 8. The usage of the words "while" and "whilst" was particularly distinctive between these two authors.

Rates per thousand words:

| Author | On | Upon | While | Whilst |
|---|---|---|---|---|
| Hamilton | 3.28 | 3.35 | 0.28 | 0.00 |
| Madison | 7.83 | 0.14 | 0.02 | 0.48 |

However, one of the aims of this project was to provide a non-biased approach to authorship attribution. This means that the software, the algorithm and the choice of feature vectors should not be skewed towards any particular author. This led to the development of an automated function word extraction algorithm, which identifies the most frequently occurring words and separates the function words from the content words using the word recurrence interval (WRI). The distinctiveness of a function word in a text can be expressed by its frequency of occurrence and the mean of its word recurrence interval.

As discussed in section 5.1, function words serve to express grammatical relationships with other words within a sentence. Hence, the frequencies of such words are much higher than those of content words. As shown in Table 9, the most frequently occurring words in the Gospel of Matthew are "and", "the" and "of".

| Function Words | Frequency of Occurrence |

|---|---|

| and | 1552 |

| the | 1405 |

| of | 673 |

Another characteristic of function words observed in a text is the word recurrence interval (WRI) between successive occurrences of the same word. Table 10 shows the WRI of the words "and", "the", "of" and "Jesus". The mean of these intervals was calculated, and the means for the function words "and", "the" and "of" were found to be much smaller than that of the noun "Jesus", a content word, whose mean of 138.70 is roughly four to ten times larger than the means obtained for the function words.

| Word | WRI mean | Word recurrence intervals |
|---|---|---|
| and | 14.28 | 3, 3, 2, 3, 3, 3, 3, 3, 3, 3, 5, 5, 3, 5, 3 |
| the | 15.86 | 2, 4, 3, 65, 3, 8, 59, 61, 14, 12, 9, 10, 27, 34, 31 |
| of | 34.23 | 2, 4, 3, 21, 25, 5, 17, 6, 121, 1, 46, 24, 37, 12, 39 |
| Jesus | 138.70 | 214, 43, 110, 95, 2, 894, 30, 24, 54, 144, 61, 38, 96, 16, 2573 |
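A minimal Python sketch of the word recurrence interval is given below. It assumes that the interval is the number of words lying between two successive occurrences of the same word, which appears consistent with the small values listed for "and" and "of" in Table 10 but is not stated explicitly; the function names are hypothetical.

```python
def recurrence_intervals(tokens, word):
    """Number of words between each pair of successive occurrences of word."""
    positions = [index for index, token in enumerate(tokens) if token == word]
    return [later - earlier - 1 for earlier, later in zip(positions, positions[1:])]


def mean_recurrence_interval(tokens, word):
    """Mean WRI of word, or None when the word occurs fewer than twice."""
    intervals = recurrence_intervals(tokens, word)
    if not intervals:
        return None
    return sum(intervals) / len(intervals)


tokens = "and isaac begat jacob and jacob begat judas and his brethren and".split()
intervals = recurrence_intervals(tokens, "and")
```

A word that occurs only once has no interval, so the mean is left undefined (`None`) rather than set to zero, which would wrongly make a rare word look like a function word.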

With these two parameters, the frequency of occurrence and the word recurrence interval, a program was developed to identify a set of features to be used in authorship attribution. Each text is analysed and its five most frequently occurring words are listed. Of these, only words that have an average word recurrence interval of fifty or less are selected. These strict conditions ensure that content words are not included in the feature vector. This feature vector is labelled Set A.
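The selection rule for Set A can be sketched in Python as follows. The thresholds (top five words, mean interval of fifty or less) come from the text; the interval definition (words between successive occurrences), the merging of per-text selections into one list and all function names are assumptions made for this illustration.

```python
from collections import Counter


def mean_wri(tokens, word):
    """Mean number of words between successive occurrences, or None."""
    positions = [i for i, token in enumerate(tokens) if token == word]
    gaps = [b - a - 1 for a, b in zip(positions, positions[1:])]
    return sum(gaps) / len(gaps) if gaps else None


def select_function_words(tokens, top_n=5, max_mean_wri=50.0):
    """Keep the top_n most frequent words whose mean WRI is at most the limit."""
    selected = []
    for word, _count in Counter(tokens).most_common(top_n):
        mean = mean_wri(tokens, word)
        if mean is not None and mean <= max_mean_wri:
            selected.append(word)
    return selected


def build_feature_set(tokenised_texts, top_n=5, max_mean_wri=50.0):
    """Merge the per-text selections into one ordered list without duplicates."""
    feature_set = []
    for tokens in tokenised_texts:
        for word in select_function_words(tokens, top_n, max_mean_wri):
            if word not in feature_set:
                feature_set.append(word)
    return feature_set
```

Because different texts contribute different top words, the merged set can be larger than five, which would be consistent with Set A containing 18 words for the English corpus.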

To provide a benchmark for the automatically generated set of function words, the 70 function words used by Mosteller and Wallace in the Federalist Papers dispute are also used in the analysis of the effectiveness of the algorithm. This set of function words, or feature vector, is labelled Set B. In addition, a list of 172 commonly used function words is used and labelled Set C. Set A is analysed against Set B and Set C to compare its effectiveness and distinctiveness in authorship attribution. Set B and Set C are not used in the analysis of the Koine Greek text, because their function words are in English and would have to be translated first. A translated set of feature vectors would not provide an accurate result, as the imposition of the translator or the effects of translation might cause an inaccurate classification.

Results and Discussion

Results from English Text

In this section, a set of English texts obtained from the Project Gutenberg archives was used to test and evaluate the effectiveness of the algorithm. A set of twenty-six texts was obtained for each author, as discussed in 1.6. The texts are divided into two sets: one is used as training data to develop a classification model, and the other is labelled as disputed text and used to evaluate the accuracy of the algorithm. The number of training texts from each author was gradually increased from five to ten, fifteen, twenty and finally twenty-two.

Figure 8 shows the accuracy of the classification model for each number of training texts. With Set A, using five texts from each author as training data, an accuracy of 83.33% was obtained on twenty-four disputed texts: twenty were classified correctly and the remaining four incorrectly. With Set B, the accuracy was 91.67%, which corresponds to twenty-two of the twenty-four disputed texts being classified correctly. Set C gave results similar to those of Set B.

As the number of training texts from each author was increased from five to ten, the accuracy of Set A dropped from 83.33% to 79.17%, with nineteen of the twenty-four disputed texts correctly classified. The opposite trend was observed for Set C, where the accuracy increased from 91.67% to 100%, with all twenty-four disputed texts correctly classified. As the number of training texts was increased further, from ten to fifteen, Figure 8 shows that the accuracy of each set increased. With twenty texts, however, the accuracy remained constant, having reached saturation. Given these observations, we proceed to the ranking system in the Support Vector Machine to analyse the texts.

In Table 11, the first column shows the classification result from the SVM, the second column shows the ranking system, and the last column shows the rightful author of the text. The incorrectly classified texts are the rows where the classification result differs from the true result. For example, the first incorrectly classified text, which belongs to the author labelled BB, was classified as RD. This incorrect classification is due to the set of features chosen to attribute the text to an author. Set A, which consists of 18 function words, is used in quite a similar way by authors BB and RD: the proportions of these function words in the texts written by the two authors are quite similar. With Set B, which consists of 70 function words, the incorrectly classified text was classified correctly. Set B is nearly four times the size of Set A, providing a more distinctive set of features with which to distinguish one author from another. Likewise, with Set C, which consists of 172 function words, all the texts labelled as disputed were correctly classified.

| Classification Results | Ranking (AD,BB,CD,HJ,RD,ZG) | True Result |

|---|---|---|

| AD | 5 2 3 1 3 1 | AD |

| AD | 5 2 2 2 4 0 | AD |

| AD | 5 3 3 2 2 0 | AD |

| AD | 5 0 4 2 3 1 | AD |

| BB | 2 5 1 0 3 4 | BB |

| RD | 1 4 2 0 5 3 | BB |

| BB | 1 5 2 0 3 4 | BB |

| BB | 1 5 2 0 3 4 | BB |

| CD | 4 1 5 2 3 0 | CD |

| CD | 2 4 5 0 1 3 | CD |

| CD | 3 4 5 0 2 1 | CD |

| CD | 4 2 5 3 1 0 | CD |

| HJ | 1 4 3 4 2 1 | HJ |

| HJ | 2 2 0 5 3 3 | HJ |

| HJ | 3 3 3 3 1 2 | HJ |

| HJ | 1 2 2 5 4 1 | HJ |

| RD | 1 4 3 0 5 2 | RD |

| RD | 1 3 1 1 5 4 | RD |

| RD | 4 1 4 2 4 0 | RD |

| RD | 3 4 2 0 5 1 | RD |

| BB | 1 5 2 0 4 3 | ZG |

| ZG | 0 4 1 2 3 5 | ZG |

| ZG | 1 4 0 2 3 5 | ZG |

| ZG | 1 2 1 2 4 5 | ZG |

On further inspection of the ranking system, the scores of the top-ranked authors differ by only one between consecutive ranks. This signifies that although the SVM attributed the disputed text to the wrong author, Set A was able to rule out the unlikely authors for that particular text. For example, in the first incorrect classification, the most likely author is RD, followed by BB and ZG, which together form the top three ranks. It is therefore very unlikely that AD, CD or HJ was the rightful author of this text, as these three authors hold the lowest ranks. Likewise, the second incorrect classification, in which a text by ZG was classified as BB, lists BB, RD and ZG as likely authors, while AD, CD and HJ have the lowest ranks, which rules out the possibility that AD, CD or HJ was the author. This principle will be applied in the analysis of the letter to the Hebrews to eliminate unlikely authors from the list of potential authors that has been generated over the years.
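The elimination step can be illustrated with the first misclassified row of Table 11. The Python sketch below assumes that the six numbers in the ranking column are pairwise win counts, listed in the order AD, BB, CD, HJ, RD, ZG; the cut-off of three likely authors follows the example in the text and is not a fixed rule, and the function name is hypothetical.

```python
def order_by_votes(authors, votes):
    """Return authors sorted from most to fewest pairwise wins."""
    paired = sorted(zip(authors, votes), key=lambda pair: -pair[1])
    return [author for author, _ in paired]


authors = ["AD", "BB", "CD", "HJ", "RD", "ZG"]
first_misclassified_row = [1, 4, 2, 0, 5, 3]   # true author BB, classified as RD

ordered = order_by_votes(authors, first_misclassified_row)
likely, unlikely = ordered[:3], ordered[3:]
```

Here `likely` contains RD, BB and ZG, so the true author BB survives the elimination even though the top-ranked prediction is wrong.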

With Set C, the accuracy of the classification model peaks with ten texts from each author; with Set B, it peaks with twenty texts; and with Set A, with twenty-two. This shows that the accuracy of the classification model is determined by two factors: the number of training texts and the set of function words used, including the number of function words chosen. For a relatively small feature vector, a larger set of training data helps to improve the accuracy of the classification model. Conversely, for a relatively large feature vector, a small set of training data is sufficient to give accurate classification results.

As the results presented in this section show, function words are valuable in authorship attribution because they are able to distinguish one author from another. In addition, with the Support Vector Machine, the ranking system was able to help identify the likely authors of a given text and eliminate other authors as the rightful author. Set A attained an accuracy of 83.33% with just five training texts from each author. However, Set A was limited to only 18 function words by the parameters imposed, as discussed in section 5.2. The results suggest that Set A is more reliable at eliminating unlikely authors of a disputed text than at attributing a disputed text to its rightful author, unless it is used with a large set of training data. Additional results for the English texts, using five, ten, fifteen, twenty and twenty-two training texts, are provided in the Appendix in section 11.6.

Results from the Federalist

In this section, the Federalist Papers are analysed to assess the effectiveness of the algorithm. The Federalist Papers were obtained from the Project Gutenberg archives, and a set of texts by each author was set aside for developing the classification model. The Federalist Papers resemble the New Testament texts in that the maximum size of a balanced training set is limited by the number of texts available from each author, so the results from the Federalist Papers are an essential guide to working with a small corpus.

The approach taken in analysing the Federalist Papers is similar to that used for the English texts. The number of training texts used to develop the classification model is gradually increased, and the results are analysed to obtain a set of optimal conditions for the authorship attribution of the New Testament.

In the first set of results, using two texts from each author to develop the classification model, the accuracy was 52.94% with Set A, 70.59% with Set B and 82.35% with Set C. As the number of training texts from each author was increased from two to three, the accuracy for Set A and Set C increased. However, the accuracy for Set A dropped when the number of training texts from each author was increased from three to four. To analyse these results further, the ranking system is evaluated.

| Classification Results | Ranking (H,J,M) | True Result |

|---|---|---|

| H | 2 0 1 | H |

| H | 2 0 1 | H |

| H | 2 0 1 | H |

| H | 2 0 1 | H |

| H | 2 0 1 | H |

| M | 1 0 2 | M |

| M | 1 0 2 | M |

| M | 1 0 2 | M |

| M | 1 0 2 | M |

| H | 2 0 1 | M |

| M | 1 0 2 | M |

| H | 2 0 1 | M |

| H | 2 0 1 | M |

| H | 2 0 1 | M |

| M | 1 0 2 | M |

| H | 2 0 1 | M |

| M | 1 0 2 | M |

As shown in Table 12, the texts that were incorrectly attributed to Hamilton (H) do share similarities with Madison (M) under Set A: Madison was ranked as the second most likely author of the texts that were incorrectly classified. Although Set A, which here consists of only 13 function words, was not able to classify these texts correctly, it was able to rule out the unlikely author, John Jay.

Likewise, in the Federalist Papers the saturation point of the classification model for Set B and Set C occurs when five texts from each author are used in developing the classification model. When the training data was increased further, to forty-six texts by Hamilton, fourteen by Madison and five by John Jay, the accuracy for Set B and Set C dropped. The training data was highly skewed towards Hamilton, with about three times as many texts by Hamilton as by Madison and about nine times as many as by John Jay. This caused the classification model to be skewed towards Hamilton as well, attributing texts in favour of Hamilton.
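One simple way to avoid the skew described above is to cap every author at the size of the smallest author's collection. The Python sketch below does this by random subsampling; this particular balancing method and the function name are assumptions made for illustration, since only the need for a balanced set is established here.

```python
import random


def balance_training_set(texts_by_author, seed=0):
    """Randomly keep the same number of texts for every author.

    The number kept equals the size of the smallest author's collection.
    """
    rng = random.Random(seed)
    smallest = min(len(texts) for texts in texts_by_author.values())
    return {author: rng.sample(texts, smallest)
            for author, texts in texts_by_author.items()}


# Placeholder identifiers standing in for the 46, 14 and 5 training texts.
corpus = {"Hamilton": list(range(46)), "Madison": list(range(14)), "Jay": list(range(5))}
balanced = balance_training_set(corpus)
```

With the counts from this section, each author is reduced to five texts, which matches the saturation point observed for Set B and Set C.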

From the observations in this section, the classification model is most accurate when a balanced set of texts from each author is used. This principle will be applied in the analysis of the New Testament, as the majority of the texts in the New Testament are attributed to Paul. In section 5.3.1 Results from English Text, it was observed that the accuracy of the classification model is determined by two factors: the number of training texts and the feature vector. For a relatively small feature vector, a large set of training data from each author helps to improve the accuracy of the classification model. Conversely, for a relatively large feature vector, a small set of training data is sufficient to give accurate classification results. Applying this principle to the New Testament, the approach taken is to "chop" a text, for example the Gospel of Matthew, into a set of smaller texts, as shown in Figure 10. This approach was taken by Talis in 2005 and was shown to be effective in expanding the amount of training data.

Results from King James Version

In this section, we analyse the effects of translation using the King James Version, an English translation of the New Testament. The aim of this test is to identify the effectiveness of the algorithm when dealing with texts that have been translated. The disputed texts used in this section are the Gospel of Luke and the Acts of the Apostles. It is widely agreed by Biblical scholars that both the Gospel of Luke and the Acts of the Apostles were written by the same person, Luke. In the first test case, the Acts of the Apostles is used as the training data and the Gospel of Luke is labelled as the disputed text. In the second test case, the Gospel of Luke is used as the training data and the Acts of the Apostles is labelled as the disputed text.

As observed in the previous sections, the accuracy of the algorithm increases with a larger set of training data. However, the New Testament contains only twenty-seven books, most of them attributed to Paul. This poses a challenge in developing an accurate classification model. To address the lack of training data, an approach similar to that of Talis was taken: a long text was broken into several smaller texts, as shown in Figure 10. This provided a larger sample with which to develop an accurate classification model. To ensure that the text was not chopped in the middle of a sentence or paragraph, the chapter numbers were used as markers for chopping the text.
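Chopping a book at chapter boundaries can be sketched in Python as below. The input layout is an assumption: each verse is taken to start with a `chapter:verse` reference such as `1:1`, which is common in plain-text editions of the King James Version but may not match the files used in the project; `chop_by_chapter` is a hypothetical name.

```python
import re


def chop_by_chapter(text):
    """Split a book into one smaller text per chapter.

    Assumes every verse starts with a 'chapter:verse' reference; lines
    without a reference are treated as continuations of the current verse.
    """
    chapters = {}
    current = None
    for line in text.splitlines():
        match = re.match(r"\s*(\d+):\d+\s+(.*)", line)
        if match:
            current = int(match.group(1))
            chapters.setdefault(current, []).append(match.group(2).strip())
        elif current is not None and line.strip():
            chapters[current].append(line.strip())
    return [" ".join(parts) for _chapter, parts in sorted(chapters.items())]


sample = "1:1 In the beginning\n1:2 was the word\n2:1 And the third day\ncontinued line\n"
chunks = chop_by_chapter(sample)
```

Each chunk can then be passed through feature extraction as a separate training text by the same author. Several consecutive chapters could also be merged into one chunk if single chapters prove too short to give stable frequencies.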

To analyse the predicted author of the Gospel of Luke, the ranking system in SVM is used. As shown in Figure 11, with the King James Version, an English translation of the Koine Greek text of the New Testament, the most likely author of the Gospel of Luke is Matthew, followed by Mark and Luke. It appears that the Gospel of Matthew and the Gospel of Mark share many similarities in terms of the usage of function words.

Although the same person, Luke, wrote both the Acts of the Apostles and the Gospel of Luke, the classification model trained with the Acts of the Apostles was unable to attribute the Gospel of Luke to Luke. This could be due to the stability of the feature vectors chosen for attribution, or to the imposition of the translator's style distorting the original words. However, the ranking system ruled out the possibility that James, Jude, Paul or Peter could have written the Gospel of Luke, as these four authors hold the lowest ranks in comparison to Matthew, Mark, Luke and John.

To analyse the predicted author of the Acts of the Apostles, the ranking system in SVM is again used. As shown in Figure 12, with the King James Version the most likely author of the Acts of the Apostles is Luke, followed by Mark and Matthew. In this case, with the Gospel of Luke as the training text and the Acts of the Apostles as the disputed text, the disputed text was attributed to the rightful author, Luke. This could be due to the feature vector of the Gospel of Luke being more stable than that of the Acts of the Apostles.

Figure 12 also shows that the Gospel of Mark and the Acts of the Apostles are quite similar, as Mark is the second most likely author of the Acts of the Apostles. Another observation is that Set A and Set B are quite similar in their predictions, producing results that are the same or differ by one rank. This observation is essential because, when analysing the New Testament in Koine Greek, only Set A will be used, as the feature vectors in Set B and Set C are limited to English text. Set A is generated automatically, using the most frequently occurring words and their word recurrence intervals as parameters.

Results from Koine Greek

In this section, we analyse the effectiveness of the algorithm using the Koine Greek text of the New Testament and the feature vector Set A. The results are compared with those obtained in section 5.3.3 Results from King James Version. Using the Koine Greek New Testament, the original text from the authors, removes the imposition of the translator's style. For this section, only Set A, the automatically generated set of function words, is used.

As shown in Figure 13, the likely author of the Gospel of Luke was predicted to be Luke or Matthew, with Mark holding a rank of three. This result differs from that of Figure 11, where the King James Version, a translation of the Koine Greek text, was used. The difference shows the effect that translation has on the accuracy of authorship attribution. Baayen observed that the imposition of an editor's or publisher's style can distort the original words of the author; in this case, the imposition is that of the translator.

In addition, it was observed that the Gospel of Matthew and the Gospel of Luke do share similarities in terms of the feature vector used to develop the classification model. However, the classification results ruled out the possibility that the author of the Gospel of Luke was James or Paul, who had the lowest ranks. Following this observation, the training texts from James and Paul were removed, as these texts contributed noise to the classification model and distorted the results of the classification. The algorithm was run again, this time without the texts from James and Paul, and the results of the classification are shown in Figure 14. The result is now more accurate, with Luke as the likely author of the Gospel of Luke.

In the next set of results, the Acts of the Apostles was labelled as the disputed text, and the Gospel of Luke took its place as the training data for the author Luke. The results of this classification are shown in Figure 15. As observed, the classification model attributed the Acts of the Apostles to Luke, with Matthew being the second most likely author. These results differ from those obtained with the King James Version, which showed Mark as one of the likely authors of the Acts of the Apostles. With the Koine Greek text, Mark was ruled out as the second most likely author of the Acts of the Apostles.

Likewise, in the ranking system, the classification result ruled out the possibility that Paul, Peter or Jude could have been the author of the Acts of the Apostles. The same observation was made that the likely authors of the Acts of the Apostles would be Luke or Matthew.

Hence, in analysing the letter to the Hebrews, the Koine Greek text of the New Testament is required to provide an accurate and conclusive result, as it reduces the imposition of the translator's style.

Results using Koine Greek for the Letter to Hebrews

In this section, the Letter to the Hebrews was labelled as the disputed text. Various texts from the New Testament were included in the corpus, together with the Epistle of Barnabas and the First Epistle of Clement of Rome. The corpus used was in beta code, which is a representation of the actual Koine Greek.

As shown in Figure 16, the most likely author of the letter to the Hebrews was predicted to be Peter or Matthew, followed by Paul and thereafter by Mark or Luke. The result in Figure 16 also eliminates the possibility that Barnabas, Clement of Rome or John would be the likely author of the letter to the Hebrews. This result would therefore remove Barnabas and Clement from the list of potential authors that has been generated over the years. Barnabas was named as a possible author because of his knowledge of the subject matter of the letter to the Hebrews. However, as shown in Figure 16, this is highly unlikely.

As the minimum number of text by a particular author limited the training data used in developing the classification model, a strong conclusion could not be formed. However, as shown in the previous results, despite having a small training set, the feature vector, Set A, was still able to make a distinction between similar text and eliminate unlikely authors.

Conclusion

In summary, the optimal conditions for authorship attribution are met when a large amount of training data is available to provide an accurate classification of each author. In addition, it is essential that the training data is not highly skewed towards a particular author. A balanced set of texts from each author is required to prevent skewing of the classification model and thus achieve a higher accuracy. It is also noted that the effects of translation or the imposition of editorial style can affect the accuracy of the results, as observed in the analysis of the King James Version and the Koine Greek text of the New Testament.

In the analysis of the New Testament texts with the function word analysis algorithm, it is concluded that Barnabas, Clement of Rome and John are unlikely to be the author of the letter to the Hebrews, as shown in Figure 16. However, Peter emerges as a possible author of the letter to the Hebrews, along with Matthew and Paul.

As shown in the above sections, the use of function words in authorship attribution is appealing because the occurrence of these words is determined by the author's style and is independent of the content of the writing. Most claims regarding the authorship of the letter to the Hebrews are based on the theme of the letter or the content that the author is writing about. For example, Paul was listed as a potential author of the letter to the Hebrews due to his previous writings. Barnabas was listed as a potential author due to his knowledge and his close association with the Apostle Paul, and Luke for his writing style (Jason G, 1998). However, as shown in the results in Figure 16, Barnabas was eliminated from the list of potential authors. Function word analysis is able to provide a non-biased approach, independent of the content of the text, to attribute a disputed text to its rightful author if the optimal conditions are met. Even with a small set of training data or a small feature vector, function word analysis is still able to eliminate unlikely authors.

Function word analysis is also applicable to a wide range of genres, as shown with the English texts, the Federalist Papers and the New Testament texts. It is independent of the content of the text, making it suitable for authorship attribution, where two authors writing on a similar topic may share many similar words or phrases \[3\]. In addition, function word analysis is applicable to different languages, as shown in the implementation with the Koine Greek text. It is also observed that function word analysis is able to work with texts that are relatively short, approximately a thousand words, as shown with the Federalist Papers. Function word analysis is computationally inexpensive as well, allowing it to be applied to a large amount of training data at little computational cost.

A future improvement in function word analysis would be to include the probability of occurrence of a function word in the feature vector instead of the proportion of function words that appear in a text. Future applications might include the use of function word analysis for authorship attribution of disputed Chinese texts. Another possible future application of this algorithm would be a search engine that produces a list of books by a particular author given a text. As electronic books are becoming increasingly popular, it would be relatively simple to find books by an author with only a single chapter of a book as an input parameter.

Word Recurrence Interval

A more statistical approach should be used for authorship detection; hence the data extraction algorithm Word Recurrence Interval (WRI) was chosen. In addition, the method makes it possible to analyse texts regardless of the text's language.

For this algorithm, the WRI is defined as the number of words in between successive occurrences of a keyword. A set of keywords, {x1, ..., xk}, is selected from the text based on the number of times each keyword appears in the text, ordered from smallest to largest. Thereafter, a scaled standard deviation of the recurrence intervals is obtained for each of the chosen keywords.

In the context of authorship attribution, this algorithm was chosen because it removes the dependency on word frequency, which characterizes the word distributions, and thus takes a more statistical approach to the analysis of the text. The obtained set of standard deviations {s1, ..., sk} can then be used to directly compare the words between different texts or within the same text itself.

After reviewing a similar project conducted by Putnins et al. [21], it was concluded that using WRI for data extraction and plotting graphs of the scaled standard deviation of WRI against log10 rank does not give satisfactory results. Instead, another type of data classification should be incorporated. For this reason, the Support Vector Machine (SVM), which showed relatively good results in the previous year's research, was used for this project.
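The interval measurement described above can be illustrated with a minimal sketch. It is written in Python for brevity, whereas the project's implementation was in Java, and it is not the project's code. The function names `recurrence_intervals` and `scaled_std` are hypothetical, and the scaling used here (population standard deviation divided by the mean interval) is an assumption, since the defining equation is not reproduced in this section.

```python
from statistics import mean, pstdev


def recurrence_intervals(words, keyword):
    """Return the number of words between successive occurrences of keyword."""
    positions = [i for i, w in enumerate(words) if w == keyword]
    return [later - earlier - 1 for earlier, later in zip(positions, positions[1:])]


def scaled_std(intervals):
    """Assumed scaling: population standard deviation divided by the mean.

    Returns None when there are fewer than two intervals or the mean is zero,
    because the ratio is not meaningful in those cases.
    """
    if len(intervals) < 2:
        return None
    m = mean(intervals)
    if m == 0:
        return None
    return pstdev(intervals) / m


words = "a b a c d e a".split()
intervals = recurrence_intervals(words, "a")
print(intervals, scaled_std(intervals))
```

Dividing by the mean makes the value less dependent on how often the keyword occurs, which is in keeping with the stated aim of removing the dependency on word frequency.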

Choice of Keywords

The keywords for the WRI method are selected based on the number of occurrences of each keyword in the text, which raises the question of how many occurrences should be required. For this reason, a limit named the "threshold" is introduced, which defines the minimum number of occurrences a word must have to be considered a keyword. Looking at the larger picture, this threshold value is a parameter that indicates how restrictive the text categorization process is. In other words, a very large threshold value would characterise a text distinctively and attribute it specifically to its corresponding author, but it increases the chance that other texts by the same author are not recognised as such.
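The threshold rule can be sketched as follows. This is only an illustration in Python, not the project's Java code. The function name `select_keywords` is hypothetical, and breaking ties alphabetically is an assumption made here to keep the ordering deterministic.

```python
from collections import Counter


def select_keywords(words, threshold):
    """Keep words occurring at least `threshold` times, ordered by count.

    Ordering is from the smallest count to the largest; ties are broken
    alphabetically (an assumption, not stated in the report).
    """
    counts = Counter(words)
    eligible = [(word, n) for word, n in counts.items() if n >= threshold]
    eligible.sort(key=lambda pair: (pair[1], pair[0]))
    return [word for word, _ in eligible]


words = "a b a c a b d".split()
print(select_keywords(words, threshold=2))
```

Raising `threshold` shrinks the keyword set to the most frequent words, which is the restriction effect described above.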

Choice of Data Dimension

The number of data dimensions, which in this scenario is the number of standard deviations taken from a text, is required for the SVM classification and therefore plays a significant role in automated authorship attribution. The number of data dimensions needs to be chosen carefully, as it determines how much data for each text is input into the SVM. A small number of data dimensions provides inadequate data for training, giving a low accuracy rate when predicting the disputed texts. However, the team found that a large number of data dimensions also gives a low accuracy rate, as the additional values are treated as noise by the SVM.
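One way to picture the data dimension is as the length of the vector handed to the classifier for each text. The sketch below, in Python rather than the project's Java, truncates a list of scaled standard deviations to a fixed dimension. The function name `feature_vector` is hypothetical, and padding short texts with `0.0` is purely an assumption, since the report does not say how texts with too few keywords were handled.

```python
def feature_vector(scaled_stds, dimension, fill=0.0):
    """Build a fixed-length vector from the first `dimension` values.

    Texts with fewer values than `dimension` are padded with `fill`
    (an assumption made for this illustration).
    """
    vector = list(scaled_stds[:dimension])
    vector.extend([fill] * (dimension - len(vector)))
    return vector


print(feature_vector([0.5, 1.2, 0.9], dimension=2))
print(feature_vector([0.5], dimension=3))
```

Every text must yield a vector of the same length, so the chosen dimension applies to the whole corpus rather than to individual texts.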

Implementation of Method

The Word Recurrence Interval algorithm was implemented in the programming language Java. During the course of the project, several versions were developed and implemented, as shown in Table 13.

| Version Number | Description |
|---|---|
| V 1.0 | Calculates the standard deviations for keywords chosen based on their number of occurrences in the text |
| V 2.0 | Keywords are chosen based on 70 function words, with their corresponding word intervals and standard deviations in the text |
| V 3.0 | Further development of WRI V 1.0 with the added ability to choose appropriate texts for training data |

The effectiveness of the WRI algorithm was evaluated, and a benchmark established, using the large corpus of English fictional texts as the test data. The team chose this corpus because it is a large data set and because it can be verified that the texts were written by their corresponding authors. The first test of the WRI algorithm used the first five texts from each author as the training data and two texts from each author as the test data. Initially, five data dimensions were used as input, and the number was then increased in steps of five. The results are shown in Appendix G: Additional Word Recurrence Interval Results.

From the results, it can be seen that the algorithm has a low accuracy rate of approximately 60%. Upon deeper investigation, it was discovered that the chosen keywords were mostly function words, which suggests that combining WRI with function words might give a better result. This motivated the team to further develop and enhance the algorithm, leading to Word Recurrence Interval Version 2.0.

A different approach to analysing the text, in an attempt to improve the accuracy, focuses on the keywords that are chosen in the text. This algorithm retains the same calculation and measurement of the word recurrence interval and its corresponding standard deviation, with the difference that the keywords are taken from a list of seventy function words. These function words, shown in Table 111, were used because Mosteller and Wallace [23] identified them as good candidates for authorship attribution studies.

| | | | | | | | | |
|---|---|---|---|---|---|---|---|---|
| A | Do | Is | Or | This | All | Down | It | Our |
| To | Also | Even | Its | Shall | Up | An | Every | May |
| Should | Upon | And | For | More | So | Was | Any | From |
| Must | Some | Were | Are | Had | My | Such | What | As |
| Has | No | Than | When | At | Have | Not | That | Which |
| Be | Her | Now | Who | Been | His | Of | Their | Will |
| But | If | On | Then | With | By | In | One | There |
| Would | Can | Into | Only | Things | Your | | | |

Since the keywords are chosen from the 70 function words shown above, the algorithm has only the number of data dimensions as its parameter, which simplifies the algorithm significantly.

The algorithm was applied to the English texts, using exactly the same data set as before. The results are shown in Appendix G: Additional Word Recurrence Interval Results under section 11.7.2: Additional Results for Word Recurrence Interval Version 2.0.

From the results, the algorithm has an even lower overall accuracy. Furthermore, it loses the consistency of Version 1.0, and the linear property of the results no longer holds. This indicates that the keywords should not be chosen based on function words alone, which is not consistent with the findings of Argamon and Levitan \[24\], who concluded that content words are not a good indicator for authorship attribution. One possible explanation is that the low accuracy is due to the differing text lengths of the training data.

With this in mind, the team concluded that WRI needs the ability to choose the training data based on text length, so that each training text has approximately the same length. This is implemented in Word Recurrence Interval Version 3.0.

In this algorithm, the word recurrence interval measurement is retained, with a modification that selects training data of an appropriate text length. The idea is for the algorithm to accept only texts whose length lies within a standard-deviation-based range around the mean text length of the whole corpus. This gives the algorithm the automated ability to choose suitable training data based on the average text length of the corpus.

This method is implemented by first calculating the average text length and its standard deviation for the corpus itself. The range is then calculated and compared with the length of each text in the corpus via Equation 11. The algorithm therefore accepts as training data only texts whose length lies within this range.

The algorithm was applied to the English texts, using exactly the same data set as before. The results are shown in Appendix G: Additional Word Recurrence Interval Results. They show that the ability to select appropriate training data increases the overall accuracy by 10%. Furthermore, the algorithm retains the linear property whereby increasing the amount of training data increases the accuracy, and it also retains the consistency of the results. However, removing training data does not seem favourable, as the discarded data might be important, which motivated the team to continue using Word Recurrence Interval Version 1.0.

Several tests, whose outcomes are discussed in the following sections, were carried out using Word Recurrence Interval Version 1.0. Four different corpora were used to verify the efficiency and performance of the algorithm. The test procedure is to first find the threshold and data dimension values that give the highest accuracy, and then use these values on the test data.
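The length filter can be sketched as below. This is an illustration in Python, not the project's Java implementation, and Equation 11 is not reproduced in this section. The sketch therefore assumes the range is the corpus mean plus or minus `k` population standard deviations, with `k = 1` as a placeholder value. The function name `select_by_length` and the example text lengths are hypothetical.

```python
from statistics import mean, pstdev


def select_by_length(text_lengths, k=1.0):
    """Return names of texts whose length lies within mean +/- k * std.

    `text_lengths` maps a text name to its length in words. The form of the
    range and the default k are assumptions made for this illustration.
    """
    lengths = list(text_lengths.values())
    centre = mean(lengths)
    spread = pstdev(lengths)
    low, high = centre - k * spread, centre + k * spread
    return [name for name, n in text_lengths.items() if low <= n <= high]


corpus = {"t1": 1000, "t2": 1100, "t3": 900, "t4": 5000}
print(select_by_length(corpus))
```

With these made-up lengths the unusually long text is excluded, which mirrors the trade-off noted in the report: the remaining texts are of comparable length, but potentially useful training data is discarded.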

Results and Discussion

Results from the English Text

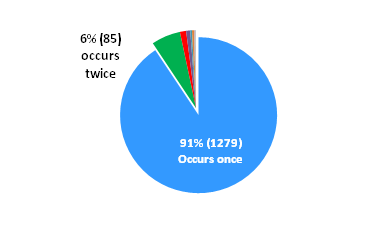

The full corpus used in the test contains a total of 156 texts written by six authors. In the results below, the authors are labelled AD, BB, CD, HJ, RD and ZG, corresponding to Sir Arthur Conan Doyle, B. M. Bower, Charles Dickens, Henry James, Richard Harding Davis and Zane Grey respectively. The team decided to use 22 texts from each author as training data, since increasing the amount of training data increases the accuracy, as shown in section 11.7.3. Looking at the results, it is clear that the linear kernel function in the SVM gives the best consistency. For the linear kernel function, the prediction accuracy is shown to be proportional to the number of data dimensions. The other kernel functions give highly erratic predictions, which makes their results difficult to interpret. The results are shown below.